Abstract

This study aims to improve the low accuracy of equipment spare parts requirement prediction, which affects the quality and efficiency of maintenance support, building on a summary and analysis of existing research on spare parts requirement prediction. Because spare parts consumption data have time series characteristics, this article applies the long short-term memory (LSTM) algorithm, which is widely used and effective for time series data, to equipment spare parts requirement prediction. A method for predicting the requirement for maintenance spare parts based on the LSTM recurrent neural network is proposed, the network structure is designed in detail, and the implementation of network training and network prediction is given. The particle swarm algorithm is introduced to optimize the network parameters, and actual consumption data for three types of equipment spare parts are used for experiments. A performance comparison with predictive models such as the BP neural network, generalized regression neural network, wavelet neural network, and squeeze-and-excitation network shows that the new method is effective and provides a scientific way to predict the requirement for maintenance spare parts and improve the quality of equipment maintenance.

1 Introduction

Equipment is the basis for improving army combat effectiveness, and it must be repaired in time to maintain and restore its technical performance. On the one hand, the upgrading of military equipment is accelerating, the level of technology and integration of equipment is constantly improving, and equipment is becoming more difficult to repair; on the other hand, preparations for combat are accelerating and equipment is used frequently. The demand for timely maintenance keeps rising, and replacement repair has gradually become the main maintenance method of grassroots maintenance organizations. To a certain extent, the timely and sufficient supply of equipment spare parts directly determines the maintenance capability of these organizations. Requirement prediction is the prerequisite and foundation of spare parts support work, and it is attracting more and more research attention.

Maintenance spare parts requirement prediction mainly covers the time, type, and quantity of the requirement. According to the basic relationship between maintenance and supply, the requirement for spare parts should be predicted from the maintenance tasks. Equipment maintenance can be divided into preventive maintenance and corrective maintenance according to the source of the task. Preventive maintenance maintains equipment performance according to standards based on the use time and mileage of the equipment. On reaching a certain threshold, the equipment is inspected and tested and its parts are replaced, whether or not it is faulty. According to scope and degree, preventive maintenance can be divided into major repairs, intermediate repairs, and minor repairs. Corrective maintenance is carried out to restore the performance of the equipment after a fault. The timing of preventive maintenance can be calculated from the equipment utilization plan, and the content of the maintenance items is defined by the standard, so the spare parts requirement is relatively easy to predict. Corrective maintenance is triggered by the natural failure of the equipment. Because the time, type, and degree of failures during the use of equipment are highly uncertain, and their characteristics and rules are not obvious, the spare parts requirement is very difficult to predict. Current research therefore focuses on this case.

There are three main approaches to spare parts requirement prediction: the empirical analysis method, the analytical function method, and the data regression prediction method. In the empirical analysis method, experts rely on maintenance experience to predict the future spare parts requirement of the equipment from the previous spare parts demand of the same or similar equipment. In ref. [1], expert forecasts were combined with Markov forecasts, and a wartime spare parts requirement prediction method based on fuzzy inference was proposed. The analytical function method describes and calculates the spare parts requirement with an analytical function when the life distribution of the spare parts is known. In ref. [2], an age replacement strategy and a requirement calculation model were proposed for spare parts with Weibull-distributed lifetimes. In ref. [3], requirement prediction models for special spare parts and shared spare parts were constructed, respectively, on the basic assumption that daily failures obey the exponential distribution and battle damage obeys the binomial distribution. In ref. [4], a prediction model combining the first-, second-, and third-order exponential smoothing methods with three- and four-term moving average methods was established to make the prediction more accurate. The data regression method analyzes the consumption data of equipment spare parts over a past period, and studies and extracts the characteristic law of consumption in order to predict the future requirement. The empirical analysis method has poor stability because expert ability varies. The analytical function method requires the life probability distribution of the spare parts, which restricts its conditions and scope of application [5], and it is unsuitable for grassroots maintenance organizations, whose spare parts reserves are small and whose turnover is slow. The data regression prediction method covers a wide range of techniques, such as the BP neural network [6], logistic regression [7], support vector machine [8], gray model [9], gray Markov model [10], and Bayesian methods [11].

Artificial neural networks have outstanding advantages in spare parts requirement prediction because of their self-learning, associative storage, and optimization abilities. Many kinds of neural networks have been applied to spare parts requirement prediction, such as the BP neural network [12], generalized regression neural network [13], radial basis neural network [14], and grey neural network [15]. To address the slow convergence and weak global search ability of artificial neural network prediction, some scholars have proposed composite methods that combine neural networks with other intelligent algorithms. The genetic algorithm was used in ref. [16], the particle swarm algorithm in ref. [17], and the ant colony algorithm in ref. [18] to optimize the artificial neural network and improve the speed and accuracy of spare parts requirement prediction. However, the above research does not specifically address the time series characteristics of spare parts requirement data and does not make good use of the relationship between data at different time points, which degrades the prediction. Based on the time series data of equipment maintenance spare parts consumption, this article proposes a prediction method based on the long short-term memory (LSTM) recurrent neural network, which uses both long-range and short-range time series information to improve the accuracy of spare parts requirement prediction.

2 Related theories of LSTM

The LSTM recurrent neural network [19], proposed by Hochreiter and Schmidhuber in 1997, aims to solve the problems of vanishing gradients, exploding gradients, and insufficient long-term memory that arise when a recurrent neural network analyzes and processes time series data. It is a widely used neural network model designed specifically for analyzing and processing time series information.

2.1 Net structure

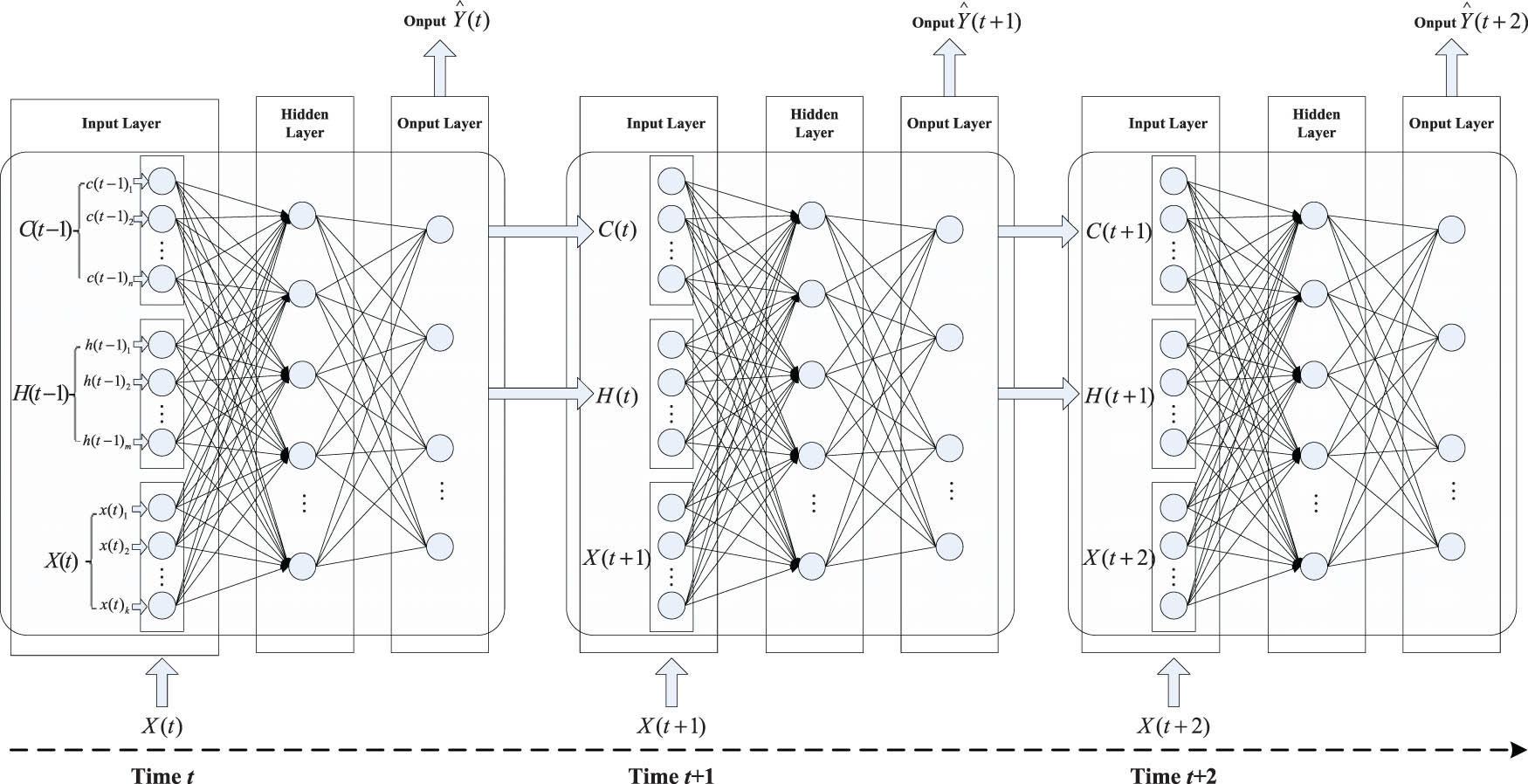

LSTM is a chain-like neural network with repeated network modules. As shown in Figure 1, each module includes a three-layer network structure, including an input layer, a hidden layer, and an output layer. Each layer of the network is composed of a different number of parallel neural units. The input layer is responsible for receiving information. The component data in the current time data information

Figure 1: Logical structure diagram of the LSTM recurrent neural network.

2.2 Neural unit design and memory realization

The core idea of LSTM is the design of adding unit state

Figure 2: Structure diagram of a hidden layer neural unit of the LSTM recurrent neural network.

2.2.1 Forget gate

The calculation formula of the forget gate is:

where

The output value

2.2.2 Input gate

The calculation formula for the input gate is

where

2.2.3 Output gate

The calculation formula for the output gate is

where

2.2.4 Network timing output

The network timing output is the predicted value of the LSTM recurrent neural network. The sequence output calculation formula in the neural unit is:

where
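The gate computations of Section 2.2 can be illustrated with a minimal NumPy sketch of one time step of an LSTM unit. It follows the commonly used textbook formulation (forget gate, input gate, candidate state, output gate), which may differ in notation or detail from the formulas in this article. The function name `lstm_step`, the stacked weight layout of `W`, and the sizes used in the demo are assumptions made only for illustration.

```python
import numpy as np


def sigmoid(z):
    """Logistic function used by the three gates."""
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x_t, h_prev, c_prev, W, b):
    """One time step of a standard LSTM unit (textbook form, assumed here).

    W has shape (4 * n, n + m) and maps the concatenation [h_prev, x_t] to
    the stacked pre-activations of the forget gate, input gate, candidate
    state, and output gate, in that order. b has shape (4 * n,).
    """
    n = h_prev.shape[0]
    z = W @ np.concatenate([h_prev, x_t]) + b
    f_t = sigmoid(z[0:n])            # forget gate: how much old state to keep
    i_t = sigmoid(z[n:2 * n])        # input gate: how much new information to admit
    g_t = np.tanh(z[2 * n:3 * n])    # candidate unit state
    o_t = sigmoid(z[3 * n:4 * n])    # output gate: how much state to expose
    c_t = f_t * c_prev + i_t * g_t   # updated unit state
    h_t = o_t * np.tanh(c_t)         # hidden output passed to the next time step
    return h_t, c_t


# Demo with illustrative sizes: m = 3 inputs (three spare part types), n = 8 hidden units.
rng = np.random.default_rng(0)
m, n = 3, 8
W = rng.normal(scale=0.1, size=(4 * n, n + m))
b = np.zeros(4 * n)
h, c = np.zeros(n), np.zeros(n)
for x_t in rng.normal(size=(5, m)):  # five synthetic time steps
    h, c = lstm_step(x_t, h, c, W, b)
```

Because the hidden output is the product of a sigmoid and a tanh, every component of `h` stays strictly inside (-1, 1), which is one reason the inputs are standardized before training.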

3 Improved LSTM algorithm

Because the LSTM recurrent neural network is very effective in processing time series data [21], it is widely used in handwriting recognition, speech recognition, machine translation, video analysis, and other fields. However, to the authors' knowledge, its application to the prediction of equipment spare parts requirement has not yet been reported. After equipment is repaired and its parts are replaced, its technical performance improves and the probability of the same failure is greatly reduced. Therefore, the consumption of spare parts at an earlier time has a strong influence on the consumption at a later time. Spare parts consumption data are typical time series data, and in theory the LSTM recurrent neural network is well suited to spare parts requirement prediction.

3.1 Algorithm procedure

Given the time series characteristics of equipment spare parts consumption data, this article proposes to use the LSTM recurrent neural network to predict the requirement for equipment spare parts, so that the technical advantages of the LSTM recurrent network can be fully exploited. The detailed design of the network structure and the concrete implementation of network training and network prediction are presented.

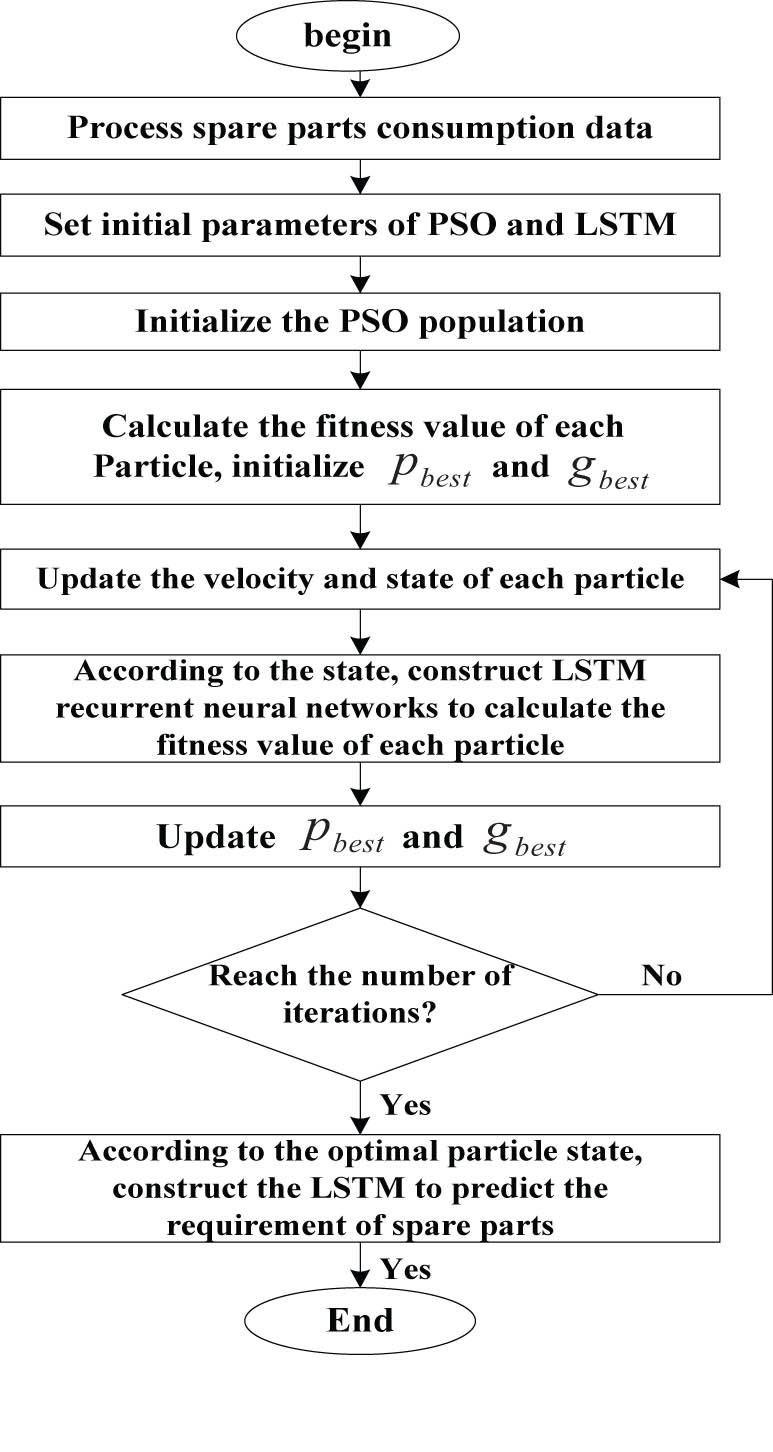

The LSTM recurrent neural network prediction model involves many parameters, and setting them manually makes the prediction performance unstable. To address this problem, an equipment spare parts requirement prediction algorithm is further proposed in which the parameters of the LSTM recurrent neural network are optimized by particle swarm optimization (PSO), with the goal of minimizing the prediction error. The structural framework of the algorithm is shown in Figure 3.

Figure 3: PSO optimizes the LSTM to predict the spare parts requirement.

The specific steps are as follows:

Step 1: Standardize the experimental data and divide it into a training set and a testing set.

Step 2: Set particle swarm algorithm parameter learning factors

Step 3: For each particle, construct an LSTM recurrent neural network from the values of its four-state vector, train the network on the training set, test it on the testing set, and use the mean square error as the fitness value of the particle.

Step 4: Determine the extreme value

Step 5: According to

Step 6: Calculate the fitness value of each particle in the population, and update

Step 7: Repeat steps 4 to 6, and after reaching the number of iterations, use the four-state vectors of particle
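The loop in Steps 2 to 7 can be sketched as follows. This is a generic particle swarm minimizer rather than the authors' implementation: the linearly decreasing inertia weight and its values `w_max` and `w_min`, the zero initial velocities, and the clipping to the search box are assumptions. The `toy_fitness` function is a hypothetical stand-in for "train an LSTM with these four parameters and return the testing-set mean square error", and the search bounds are illustrative.

```python
import numpy as np


def pso_minimize(fitness, lower, upper, n_particles=20, n_iter=50,
                 c1=0.5, c2=0.5, w_max=0.9, w_min=0.2, seed=0):
    """Minimize `fitness` over a box; returns (best_pos, best_val, history)."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    dim = lower.size
    pos = rng.uniform(lower, upper, size=(n_particles, dim))
    vel = np.zeros((n_particles, dim))
    pbest_pos = pos.copy()                                   # individual extreme values
    pbest_val = np.array([fitness(p) for p in pos])
    g = int(np.argmin(pbest_val))
    gbest_pos, gbest_val = pbest_pos[g].copy(), float(pbest_val[g])  # group extreme value
    history = [gbest_val]
    for k in range(n_iter):
        # Assumed schedule: inertia weight decreases linearly from w_max to w_min.
        w = w_max - (w_max - w_min) * k / max(n_iter - 1, 1)
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        vel = w * vel + c1 * r1 * (pbest_pos - pos) + c2 * r2 * (gbest_pos - pos)
        pos = np.clip(pos + vel, lower, upper)               # keep particles in the box
        vals = np.array([fitness(p) for p in pos])
        improved = vals < pbest_val
        pbest_pos[improved] = pos[improved]
        pbest_val[improved] = vals[improved]
        g = int(np.argmin(pbest_val))
        if pbest_val[g] < gbest_val:
            gbest_pos, gbest_val = pbest_pos[g].copy(), float(pbest_val[g])
        history.append(gbest_val)
    return gbest_pos, gbest_val, history


def toy_fitness(p):
    """Hypothetical stand-in for the LSTM testing-set mean square error."""
    target = np.array([3.0, 5.0, 7.0, 2.0])
    return float(np.sum((p - target) ** 2))


lower, upper = [0.0] * 4, [10.0] * 4
best_pos, best_val, history = pso_minimize(toy_fitness, lower, upper)
```

In the setting described above, each fitness evaluation trains a network, so the cost of the search is roughly the number of particles times the number of iterations times the cost of one training run. This is why a smaller number of training cycles is attractive during the optimization stage.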

3.2 Data processing and network construction

The spare parts consumption data is the time series data

The standardized data set

As the requirement for m kinds of spare parts is predicted based on the consumption data of m kinds of spare parts, the number of neural units in the input layer is m, and the number of neural units in the output layer is also m. The number of hidden layer neural units is
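As an illustration of the data preparation described in this subsection, the sketch below standardizes a monthly consumption matrix, splits it chronologically, and builds sliding-window samples. Several points are assumptions: z-score standardization with training-set statistics is used because the standardization formula is not reproduced here, the window length `lag` is a hypothetical stand-in for how many past months feed one prediction, and the consumption values are synthetic.

```python
import numpy as np


def standardize_and_split(data, n_train):
    """Split a (months, m) matrix chronologically and z-score each column.

    The mean and standard deviation come from the training part only,
    so no information from the testing months leaks into training.
    """
    data = np.asarray(data, dtype=float)
    train, test = data[:n_train], data[n_train:]
    mu = train.mean(axis=0)
    sigma = train.std(axis=0)
    sigma[sigma == 0] = 1.0          # guard against constant columns
    return (train - mu) / sigma, (test - mu) / sigma, mu, sigma


def make_windows(series, lag):
    """Turn a (T, m) series into inputs of shape (T - lag, lag, m) and next-step targets."""
    X = np.stack([series[i:i + lag] for i in range(len(series) - lag)])
    y = series[lag:]
    return X, y


# Synthetic stand-in for 48 months of consumption of m = 3 spare part types.
rng = np.random.default_rng(1)
consumption = rng.integers(0, 20, size=(48, 3))
train, test, mu, sigma = standardize_and_split(consumption, n_train=36)
X_train, y_train = make_windows(train, lag=3)
```

Each input sample has m features per time step and each target has m values, which matches the m neural units in both the input layer and the output layer.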

3.3 Network training

Set the network training parameters, the number of training cycles MaxEpochs, the number of training data subsets MiniBatchSize, the prediction interval

According to the loss function value

3.4 Network prediction

To test the effectiveness of the network, the trained LSTM recurrent neural network and the testing set data
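The mean square error used to judge the predictions on the testing set can be computed as in the sketch below. The helper names `mean_square_error` and `destandardize` are hypothetical, and mapping predictions back to original units before scoring is an assumption about the workflow, not something the text specifies. The small matrices in the demo are made-up numbers, not data from this study.

```python
import numpy as np


def destandardize(z, mu, sigma):
    """Map standardized values back to original consumption units."""
    return np.asarray(z, dtype=float) * sigma + mu


def mean_square_error(predicted, actual):
    """Mean of squared differences over all months and all spare part types."""
    predicted = np.asarray(predicted, dtype=float)
    actual = np.asarray(actual, dtype=float)
    if predicted.shape != actual.shape:
        raise ValueError("predicted and actual must have the same shape")
    return float(np.mean((predicted - actual) ** 2))


# Made-up example: 2 months x 3 spare part types.
actual = np.array([[4, 2, 7],
                   [5, 3, 6]])
predicted = np.array([[5, 2, 5],
                      [5, 4, 6]])
mse = mean_square_error(predicted, actual)  # squared errors 1, 0, 4, 0, 1, 0 average to 1.0
```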

3.5 Parameter optimization

The LSTM recurrent neural network is trained on the training set data, which optimizes and adjusts the weight parameters and bias vectors of the input layer, hidden layer, and output layer to obtain a network suited to the prediction object. After training is completed, the testing set data are used to test the prediction performance of the network. In LSTM training and prediction, four key parameters affect the quality of the algorithm: the number of hidden layer neural units, the prediction interval, the number of training data subsets (MiniBatchSize), and the initial learning rate.
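A particle swarm works on real-valued position vectors, while three of the four key parameters are integers, so each particle has to be decoded before a network can be built. The sketch below shows one way to do this. The class `LstmHyperParams`, the function `decode_particle`, and especially the search bounds in `BOUNDS` are hypothetical, since the ranges actually searched are not given here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LstmHyperParams:
    hidden_units: int             # number of hidden layer neural units
    prediction_interval: int      # prediction interval
    mini_batch_size: int          # number of training data subsets (MiniBatchSize)
    initial_learning_rate: float  # initial learning rate


# Hypothetical search bounds (lower, upper), one pair per parameter.
BOUNDS = (
    (1, 200),
    (1, 12),
    (1, 36),
    (1e-4, 1e-1),
)


def decode_particle(position, bounds=BOUNDS):
    """Clip a 4-dimensional particle position to the bounds and round the integer parameters."""
    if len(position) != len(bounds):
        raise ValueError("position must have one entry per parameter")
    clipped = [min(max(float(p), lo), hi) for p, (lo, hi) in zip(position, bounds)]
    return LstmHyperParams(
        hidden_units=int(round(clipped[0])),
        prediction_interval=int(round(clipped[1])),
        mini_batch_size=int(round(clipped[2])),
        initial_learning_rate=clipped[3],
    )


params = decode_particle([250.7, 2.4, 0.0, 0.005])
```

In this example the first and third coordinates fall outside the assumed bounds and are clipped, and the second is rounded to the nearest integer.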

4 Calculation case

4.1 Experimental data and parameter settings

This article takes 48 months of consumption data for the three main types of maintenance spare parts of a maintenance organization as an example to illustrate the prediction procedure. The consumption of the three types of spare parts is shown in Figure 4.

Figure 4: Consumption of three types of spare parts in a maintenance organization.

The particle swarm algorithm is used to optimize the LSTM recurrent neural network for predicting the requirement for equipment maintenance spare parts. The main initial parameter settings of the algorithm are shown in Table 1. To reduce calculation time, the number of training cycles is set to 100 while the particle swarm optimizes the LSTM parameters; to improve prediction accuracy, it is set to 300 when the network is constructed with the optimal parameters for prediction.

Table 1: Algorithm parameter settings

| Algorithm | Parameter | Value |
|---|---|---|
| Particle swarm | Learning factor | 0.5, 0.5 |
| Particle swarm | Maximum inertia weight | 3, 0.2 |
| Particle swarm | Number of iterations | 100 |
| Particle swarm | Number of populations | 100 |
| LSTM | Number of neural units in input layer | 3 |
| LSTM | Number of neural units in output layer | 3 |
| LSTM | Number of training cycles MaxEpochs (optimization procedure) | 100 |
| LSTM | Number of training cycles MaxEpochs (prediction procedure) | 300 |
| LSTM | Months of training set | 36 |
| LSTM | Months of testing set | 12 |

4.2 Simulation result analysis

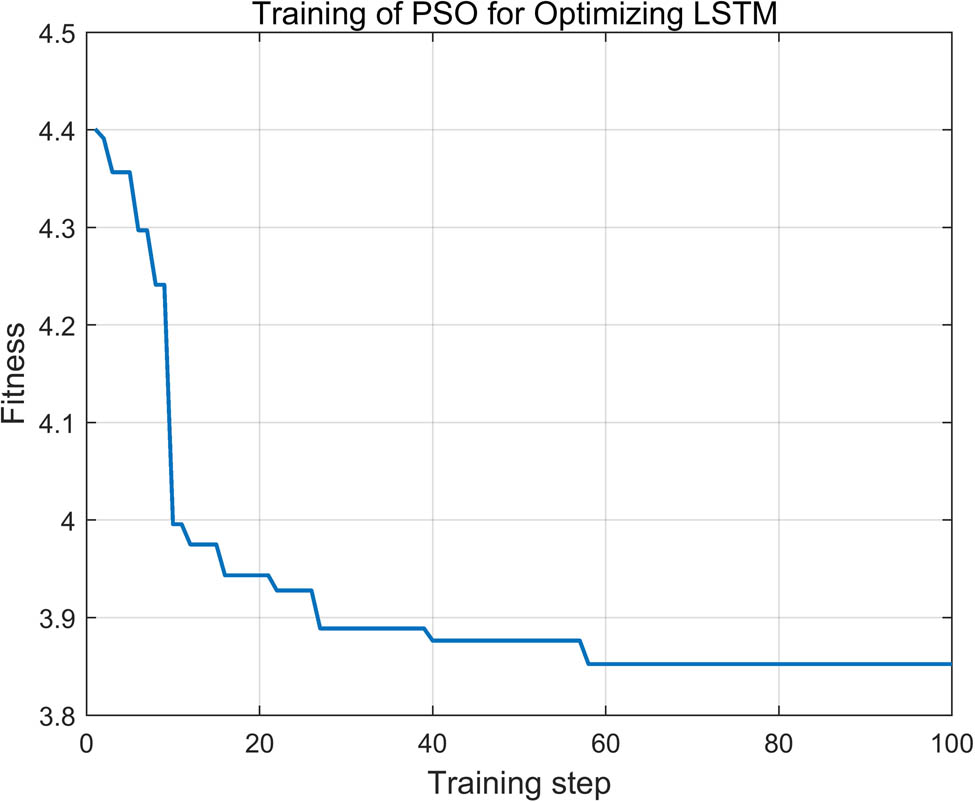

With the minimum mean square error as the objective, the particle swarm algorithm is run for 100 iterations to optimize the four key parameters of the LSTM recurrent neural network. The training process is shown in Figure 5. The algorithm converges after 58 iterations, and the optimal mean square error is 3.8524. The optimal values of the four key parameters of the LSTM recurrent neural network are:

Figure 5: The training process of PSO optimizing the key parameters of LSTM.

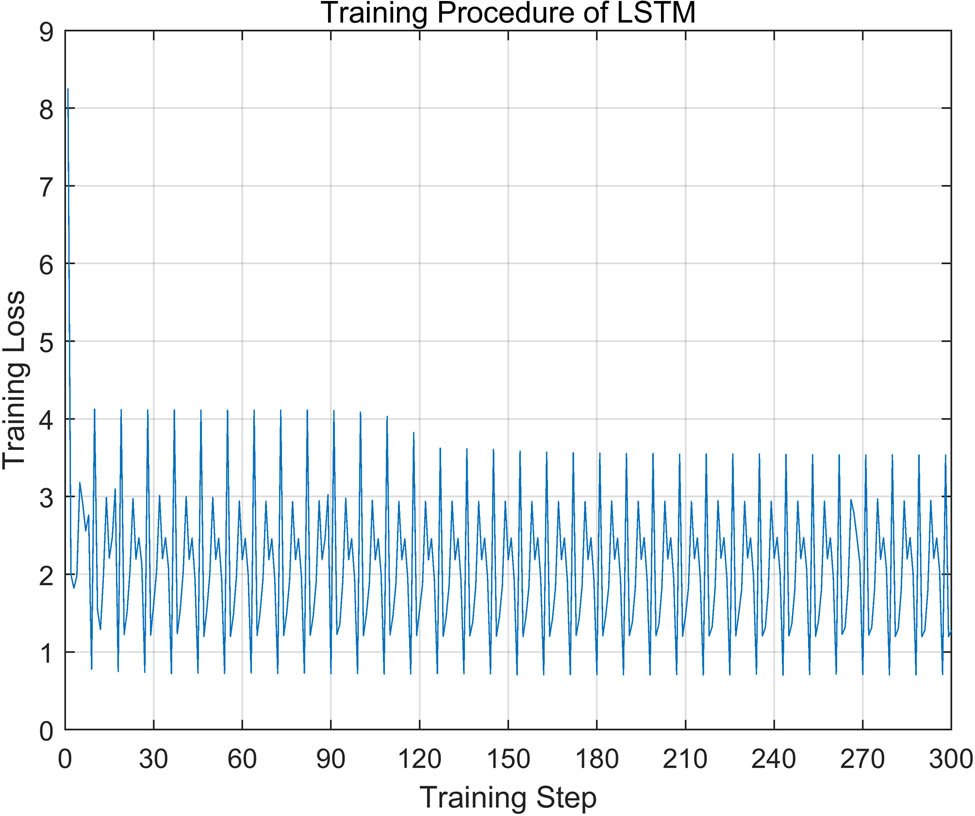

The LSTM recurrent neural network is constructed using the four optimal parameters, and the network is trained 1,000 times with 36 months of consumption data for the three types of spare parts. The values of the loss function during training are shown in Figure 6.

Figure 6: Loss function values in LSTM recurrent neural network training.

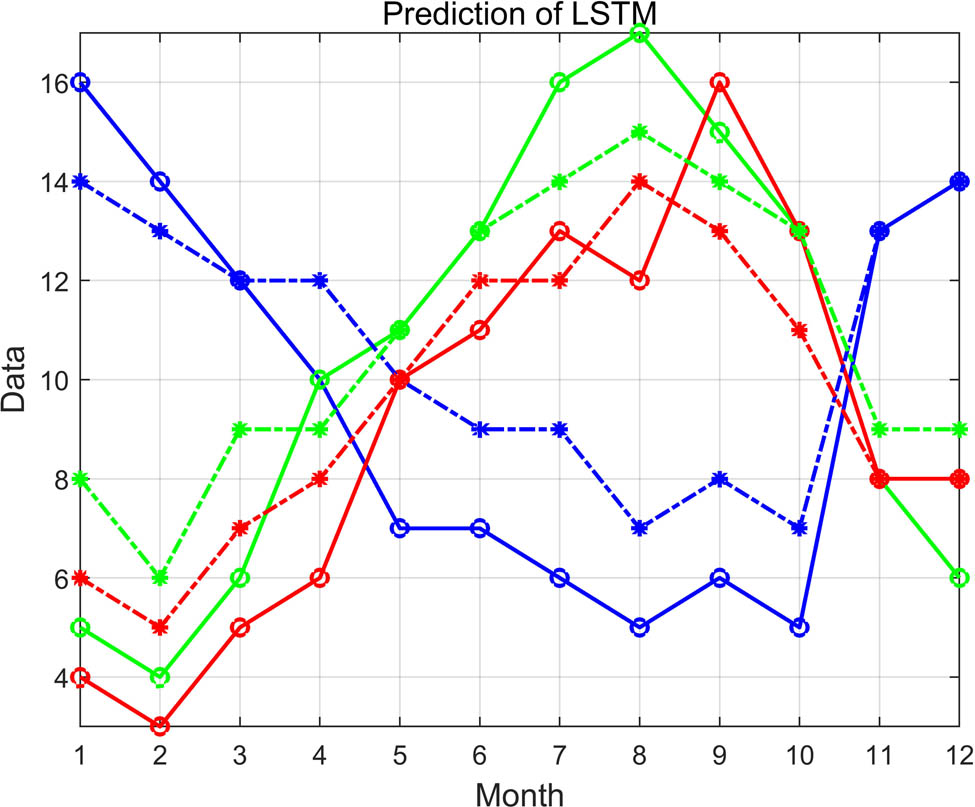

The algorithm converges well, and the final loss function value is low. The trained network is tested with the remaining 12 months of consumption data; the comparison between the predicted values and the actual consumption values is shown in Figure 8, and the mean square error is 3.3611. The prediction error for August is relatively large because the equipment was suddenly used heavily during that period, which was inconsistent with the previous two years.

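Figure 8: Comparison of predicted and actual consumption values for the LSTM recurrent neural network.

Figure 9: Comparison of predicted and actual consumption values for the BP neural network.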

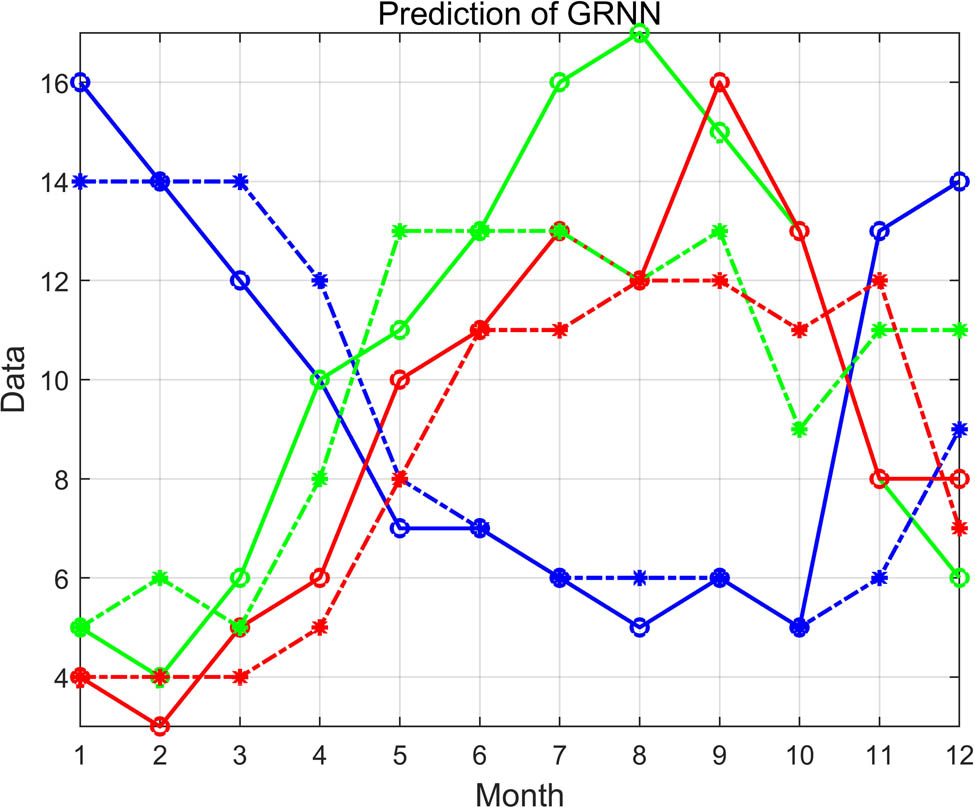

Figure 10: Comparison of predicted and actual consumption values for the GRNN.

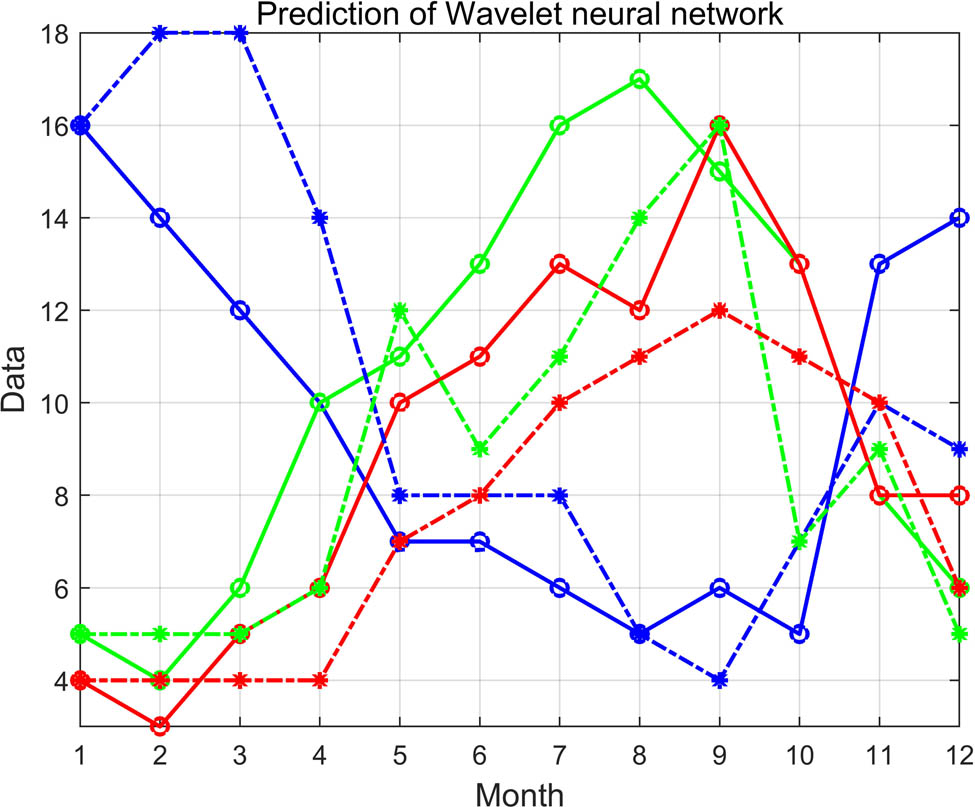

Figure 11: Comparison of predicted and actual consumption values for the wavelet neural network.

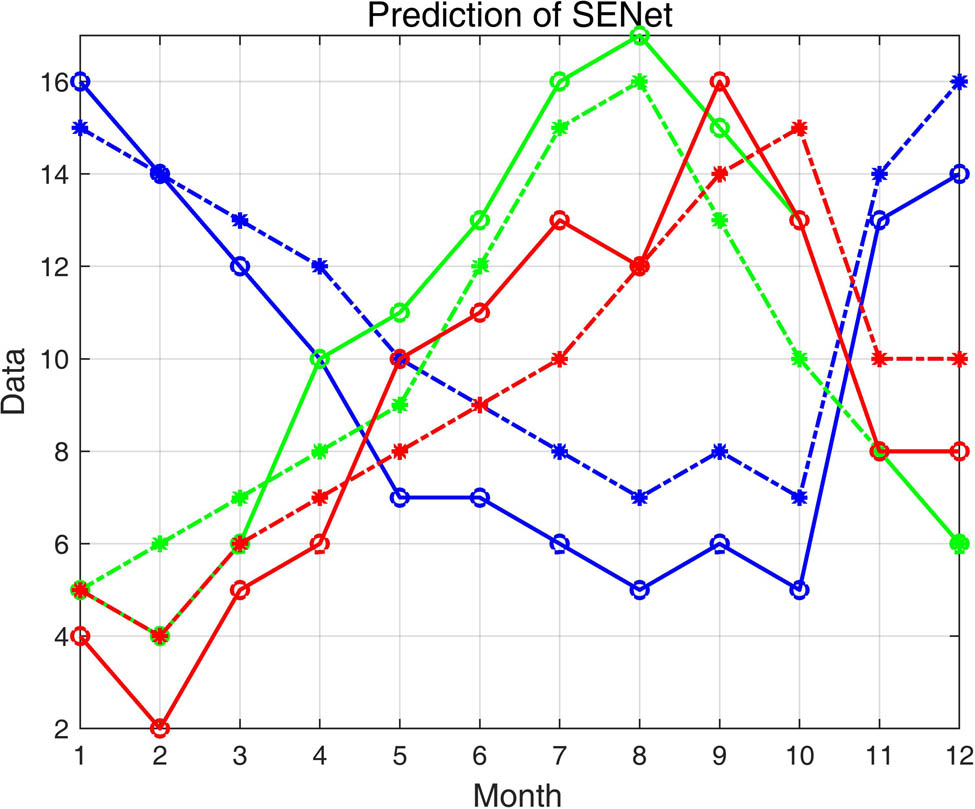

To verify the superior prediction performance of the LSTM recurrent neural network, comparative experiments were performed. Four widely used neural networks, namely the BP neural network, the generalized regression neural network (GRNN), the wavelet neural network, and the squeeze-and-excitation network (SENet), were also used to predict the spare parts requirement with the same training set and testing set. After 1,000 rounds of network training, the 12-month requirement for the 3 types of maintenance spare parts was predicted, and the comparison between the predicted values of the four networks and the actual consumption values was obtained, as shown in Figures 9–12. The mean square errors of the five networks, including the LSTM, are shown in Table 2. The curves of predicted versus actual consumption and the mean square error values show that the LSTM recurrent neural network has the lowest mean square error among the compared models, and therefore the highest prediction accuracy, which gives it a clear advantage in predicting the requirement for maintenance spare parts with time series characteristics.

Figure 12: Comparison of predicted and actual consumption values for SENet.

Table 2: Comparison of the mean square error of the five neural networks

| LSTM | BP | GRNN | Wavelet | SENet |
|---|---|---|---|---|
| 3.3611 | 8.4722 | 6.5833 | 7.9444 | 4.5812 |
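To make the gap between the models easier to read, the short sketch below ranks the mean square errors reported in Table 2 and computes the relative reduction achieved by the LSTM against each baseline. The relative-reduction measure is an illustrative addition and is not a metric reported by the authors.

```python
# Mean square errors as reported in Table 2.
mse = {
    "LSTM": 3.3611,
    "BP": 8.4722,
    "GRNN": 6.5833,
    "Wavelet": 7.9444,
    "SENet": 4.5812,
}

# Models ordered from lowest to highest mean square error.
ranking = sorted(mse, key=mse.get)

# Relative reduction of the LSTM error with respect to each baseline:
# (baseline - LSTM) / baseline.
reduction = {
    name: (value - mse["LSTM"]) / value
    for name, value in mse.items()
    if name != "LSTM"
}

for name in ranking[1:]:
    print(f"{name}: LSTM error is {reduction[name]:.1%} lower")
```

Under this measure the LSTM error is roughly 27% lower than that of SENet, the closest competitor, and roughly 60% lower than that of the BP neural network.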

5 Conclusion

This article summarized the significance and current state of research on equipment maintenance spare parts requirement prediction, analyzed the time series characteristics of spare parts consumption data together with the principle and advantages of the LSTM algorithm for processing time series data, and proposed a spare parts prediction method based on the LSTM recurrent neural network. The procedure for implementing the algorithm was designed. The methods of data standardization and data set division, the key issues of network training and network prediction, and the construction of the network input layer, hidden layer, and output layer were described. The article focused on applying the particle swarm algorithm to optimize four important parameters of the LSTM recurrent neural network: the number of hidden layer neural units, the prediction interval, the number of training data subsets, and the initial learning rate. Finally, the algorithm was implemented in Matlab and verified using 48 months of consumption data for 3 types of maintenance spare parts of a certain unit, and its performance was compared with that of the BP neural network, GRNN, wavelet neural network, and SENet to verify its reliability and accuracy. The limitations of the method are that its complexity is relatively high, its computation time is relatively long, and it needs a large amount of data to support training. As a next step, it is necessary to collect long-term, large-scale maintenance spare parts consumption data and to improve the algorithm experimentally.

Funding information: The authors state no funding involved.

Author contributions: All authors have accepted responsibility for the entire content of this manuscript and approved its submission.

Conflict of interest: The authors state no conflict of interest.

References

[1] Liu X, Zhu L, Zhang W. Demand forecasting of fuzzy inference-based wartime spares. Acta Armamentarii. 2013;34(9):1197–200.

[2] Wang Y, Ran H, Ren W. A computing method of Weibull distribution spare parts demand based on age replacement policy. Fire Control Comm Control. 2017;42(3):64–6.

[3] Kovacs K, Ansari F, Sihn W. A modified Weibull model for service life prediction and spare parts forecast in heat treatment industry. Proc Manuf. 2021;54(7):172–7. doi:10.1016/j.promfg.2021.07.026

[4] Lin C, Feng G, Zishuo W. An optimal combination prediction method of turnover spare parts consumption based on certain weight. J Phys Conf Ser. 2021;1955(1):1–8. doi:10.1088/1742-6596/1955/1/012122

[5] Zhang JX, Si XS, Du DB, Hu CH, Hu C. A novel iterative approach of lifetime estimation for standby systems with deteriorating spare parts. Reliab Eng Syst Saf. 2020;201:1–13. doi:10.1016/j.ress.2020.106960

[6] Chen FL, Chen YC, Kuo JY. Applying moving back-propagation neural network and moving fuzzy neuron network to predict the requirement of critical spare parts. Expert Syst Appl. 2010;37(6):6695–704. doi:10.1016/j.eswa.2010.04.037

[7] Xu S, Zhang H, Nie T, Wang H. Forecasting for materials with intermittent demand based on combined forecasting. Syst Eng Electron. 2012;34(1):111–4.

[8] Yu L, Yang ZB, Tang L. Prediction-based multi-objective optimization for oil purchasing and distribution with the NSGA-II algorithm. Int J Inf Technol Decis Mak. 2016;15(2):423–51. doi:10.1142/S0219622016500097

[9] Mei G, Zhong B, Zhang X, Zhao Z. Combination forecasting model of equipment spare parts demand based on IOWA operator. Ordnance Ind Autom. 2013;32(1):8–11.

[10] Yu F, Li C, Zhang W. Research on the optimal order time of repairable spare parts of aviation. Mater Mech Eng Autom. 2016;198(5):28–30. doi:10.3901/JME.2016.15.028

[11] Liu W, Zhang Y, Dang L. A new prediction model of equipment stock based on gray-Bayesian. Ordnance Ind Autom. 2010;29(4):48–51.

[12] Dou Y, Wang S. Research on spare part requirement prediction of field drill based on BP NN. Ordnance Ind Autom. 2010;29(3):33–4+37.

[13] Luo Y, Xu K. Demand forecast of equipment spare parts based on generalized regression neural network. Precise Manuf Autom. 2014;2:37–8+46.

[14] Guan Z, Chang W. Prediction method of aviation spare parts based on PCA-RBF neural network model. J Beijing Technol Bus Univ (Nat Sci Ed). 2009;27(3):60–4.

[15] Lu Q, Bai M, Peng Y, Zhang W. Armored equipment requirement forecasting based on grey neural network. J Acad Armored Force Eng. 2011;25(6):19–22.

[16] Liu G, Zhong X, Dong X. Research on warship electronic equipments spare parts optimize model based on genetic algorithm and neural network. Ship Sci Technol. 2008;30(5):138–42.

[17] Cao Y, Li Y. Forecasting key spare parts of complex equipments by combining fuzzy neural network and particle swarm optimization. Comput Appl Softw. 2014;31(10):167–71+179.

[18] Zhang H, Nguyen H, Bui XN, Nguyen-Thoi T, Bui TT, Nguyen N, et al. Developing a novel artificial intelligence model to estimate the capital cost of mining projects using deep neural network-based ant colony optimization algorithm. Resour Policy. 2020;66:1–16. doi:10.1016/j.resourpol.2020.101604

[19] Hochreiter S, Schmidhuber J. Long short-term memory. Neural Comput. 1997;9(8):1735–80. doi:10.1162/neco.1997.9.8.1735

[20] Jafarian A, Measoomy Nia S, Khalili Golmankhaneh A, Baleanu D. On artificial neural networks approach with new cost functions. Appl Math Comput. 2018;339:546–55. doi:10.1016/j.amc.2018.07.053

[21] Kim YG, Park ES, Kim BC, Lee SH, Lee SH. Prediction of the major factors for the analysis of the erosion effect on atomic oxygen in LEO satellite using a machine learning method (LSTM). J Aerosp Syst Eng. 2020;14(2):50–6.

[22] Wang X, Wu J, Liu C, Yang H, Du Yanli NI. Exploring LSTM-based recurrent neural network for failures time series prediction. J Beijing Univ Aeronaut Astronaut. 2018;44(4):772–84.

[23] Upadhyay C. Construction of adaptive pulse coupled neural network for abnormality detection in medical images. Appl Artif Intell. 2018;32(6):477–95. doi:10.1080/08839514.2018.1481818

© 2021 Weixing Song et al., published by De Gruyter

This work is licensed under the Creative Commons Attribution 4.0 International License.


- Fully Legendre spectral collocation technique for stochastic heat equations

- Special Issue on 5th International Conference on Mechanics, Mathematics and Applied Physics (2021)

- Residual service life of erbium-modified AM50 magnesium alloy under corrosion and stress environment

- Special Issue on Advanced Topics on the Modelling and Assessment of Complicated Physical Phenomena - Part I

- Diverse wave propagation in shallow water waves with the Kadomtsev–Petviashvili–Benjamin–Bona–Mahony and Benney–Luke integrable models

- Intensification of thermal stratification on dissipative chemically heating fluid with cross-diffusion and magnetic field over a wedge
