Abstract

While Wikipedia is a widely used source for medical and health information, few studies have examined disparities in perceived information quality based on gender, race, and age. This study investigates how Wikipedia users evaluate the quality of health-related content and whether perceptions differ across these sociodemographic groups. A total of 321 adult Wikipedia readers and contributors participated in a survey assessing five dimensions of information quality: presentation, trustworthiness, reliability, currency, and topic coverage. Descriptive statistics and multivariate analysis of variance (MANOVA) were used to evaluate perceived quality and identify group differences. Overall, both genders viewed the quality of Wikipedia’s health information positively. However, statistically significant gender differences were identified across the five quality factors, with males reporting higher ratings than females. Significant differences between White and non-White participants were also found in four of the five dimensions, excluding currency, with non-White participants reporting lower perceptions of quality. No significant age-based differences were identified. These findings contribute to a more nuanced understanding of how different demographic groups assess health content on Wikipedia. By highlighting disparities in perceptions, this study provides valuable insights for improving Wikipedia’s inclusivity and trustworthiness in health communication.

1 Introduction

Wikipedia, the peer-produced online encyclopedia, is a critical resource for health information (Smith 2023; Huisman et al. 2021). During the early stages of the novel coronavirus pandemic, for example, Wikipedia saw rapid expansion in COVID-related content, with over 5,000 new Wikipedia pages created over several months. This newly generated health information was quickly consumed, resulting in over 400 million page views by mid-June 2020 (Colavizza 2020, 1349). Wikipedia has become a major source of general information, and its prominence in consumer health information seeking is no exception. It is the ninth-most visited website in Canada and the United States as of March 2025 (Similarweb 2025a, 2025b). Wikipedia’s impact extends well beyond direct information searching on the platform. The online encyclopedia is the second top data source for ChatGPT (Schaul et al. 2023) and a popular data source for the virtual assistants Alexa and Google Assistant. Therefore, Wikipedia plays a pivotal role in undergirding modern society’s information creation and information seeking infrastructure.

While many individuals turn to Wikipedia for their health and/or medical information, what remains unclear is how users across different sociodemographic groups such as age, gender, and race perceive the quality of health-related content on Wikipedia. This is an important but often overlooked aspect of health information seeking. Researchers have explored gender bias in the Wikipedia universe with respect to its editors and the subsequent content that is created (Tripodi 2023; Lir 2021; Langrock and González-Bailón 2022). However, there has been far less focus on gender and health information quality. Other studies have centered on the lack of racial diversity among editors (Falk et al. 2023; Lemieux et al. 2023) and how the structure of Wikipedia decenters minoritized communities (Mandiberg 2023). However, research on editors and readers from non-dominant communities and their information behaviors within Wikipedia remains sparse. A few studies have focused on Black Wikipedians’ motivations and perceptions of information bias, but they have not explored health information aspects (Stewart and Ju 2020; Ju and Stewart 2019). Similarly, few studies have focused on age as a factor in health information quality on Wikipedia (Huisman et al. 2021). Therefore, there is a need to better understand how a diverse set of users across age, gender, and race perceive the quality of health information on Wikipedia.

2 Literature Review

2.1 Perceived Quality of Health Information and Health Contents on Wikipedia

As online information proliferates, the perceived quality of the information has become a crucial research topic among information science researchers. Since the introduction of Wang and Strong’s (1996) data quality framework, it has been widely employed in a variety of studies focusing on information quality in both traditional settings and online information environments. Wang and Strong’s conceptual framework consists of four categories: Intrinsic, Contextual, Representational, and Accessibility Information Quality. Intrinsic information quality refers to essential characteristics of information with the dimensions of accuracy (Rieh and Belkin 1998; Katerattanakul and Siau 2008; Ju and Stewart 2024) and reliability such as the functionality of hyperlinks on Web documents. Contextual information quality pertains to how well the information fits the specific task or product context. For instance, the quality of health information provided by the National Cancer Institute (Koo et al. 2011) or e-commerce information found online (Lee et al. 2007) has been assessed as part of this category. Representational information quality refers to the layout and organization of information as perceived visually by users. The information should be interpretable, concise, and consistent (Rogova and Bosse 2010), while accessibility quality involves helping users navigate or search for the information they need (Lee et al. 2007).

While Wang and Strong’s (1996) original work identified specific dimensions within each of the four categories, subsequent studies have modified and expanded this theoretical framework by developing additional dimensions tailored to specific contexts for measuring information quality. Similar categories, such as accuracy, completeness, readability, design, disclosures, and references, have been frequently cited in evaluations of health information (Eysenbach et al. 2002). Stvilia et al. (2009) also proposed five constructs (i.e., accuracy, completeness, authority, usefulness, and accessibility) for developing an information quality model in the context of healthcare provider websites. More recently, Ju and Stewart (2024) revisited Wang and Strong’s framework with a focus on online information quality. They employed a grounded theory approach to collect empirical data on perceived quality from 197 users of consumer health information. They then conducted content analysis and performed factor analysis to identify twenty-six dimensions of information quality. Subsequently, six of these dimensions (i.e., accuracy, trustworthiness, reliability, currency, topic coverage, and information presentation) were used to examine user satisfaction with consumer health information on Wikipedia (Ju et al. 2024).

Given the vast number of articles and the wide range of topics covered on Wikipedia, assessing the information quality is challenging, particularly because the content is user-generated. Although Wikipedia has become one of the most frequently accessed online information sources, with a comparable number of errors to those found in Encyclopedia Britannica (Giles 2005; Smith 2020), prior studies have offered conflicting evidence regarding the overall quality of Wikipedia content, which often varies by subject domain. Wikipedia’s health-related articles are among the most widely used sources of health information. They are appreciated for their broad topic coverage, extensive references, and collaborative updates (Shafee et al. 2017; Heilman et al. 2011). However, these articles have also been criticized for low readability, omission errors, and uneven topic representation (Mesgari et al. 2015). As more individuals turn to online sources for health information instead of traditional consultations with physicians or healthcare providers (Renahy et al. 2010), ensuring the quality of these digital resources has become increasingly important.

2.2 Online Health Information Seeking

Technology has become part of our lifestyle (Lewis 2006). The internet has also become a major source of health information (Jia et al. 2021) that empowers individuals to better understand their health concerns, make medical decisions, and manage different health conditions (Di Novi et al. 2024; Li et al. 2025). According to the 2024 Health Information National Trends Survey (HINTS), nearly 83 % of U.S. adults reported seeking health information online (National Cancer Institute 2025). In this context, our study is grounded in the broader academic discourse on online health information seeking (OHIS), which examines how people, motivated by a need for medical or health information, actively search for and obtain that information using online and social media platforms (Bratland et al. 2024; Lewis et al. 2021). This line of research includes an extensive literature on online health information seeking behavior (OHISB), factors affecting OHISB, and the impact of OHIS on other health-related activities, such as clinic visits.

Key findings of several previous works related to the current study are synthesized as follows. First, OHISB refers to users’ deliberate attempts to stay informed or to meet a medical or health information need by utilizing the internet (Bratland et al. 2024; Di Novi et al. 2024; Johnson 1997). The term OHISB is often used interchangeably with OHIS and e-HISB. A recent study by Smith (2023) analyzing twenty-one user experiences with health information on Wikipedia is particularly relevant to our current study. The findings indicate that Wikipedia’s health content offers several advantages, such as convenience, accessibility, and support for personal autonomy. However, whether users choose to trust the content depends on specific situations and their individual experiences. Second, OHIS has the following four characteristics: (1) There is more abundant health information available on the internet than ever before; (2) OHISB is popular due to the affordability, easy accessibility, convenience, and anonymity of online resources; (3) successful OHISB enables individuals to be better informed and may enhance communication with healthcare service providers; and (4) unsuccessful OHISB may lead to confusion, misinterpretation, and even cyberchondria, which can heighten fear, anxiety, and mistrust of medical authority (Di Novi et al. 2024; Starcevic 2024).

Third, OHISB can be influenced by numerous sociodemographic factors, such as age, gender, race/ethnicity, income, employment status, educational level, places of residence (e.g., rural or urban), and health status/condition (Bratland et al. 2024; Di Novi et al. 2024; Finney Rutten et al. 2019; Jia et al. 2021; Li et al. 2025; Liu et al. 2024; Ma et al. 2023; Miller and Bell 2012; Starcevic 2024). Fourth, when conducting OHISB, people may have concerns about the quality, credibility, and trustworthiness of health information on the internet (Di Novi et al. 2024; Jia et al. 2021; Liu et al. 2024; Ma et al. 2023; Miller and Bell 2012; Starcevic 2024; Wang et al. 2021). To address these concerns, individuals can improve their eHealth literacy (i.e., the ability to assess and understand online health information) or gain professional and social support from online communities (Jia et al. 2021; Ma et al. 2023).

2.3 Wikipedia Studies on Demographic Groups

Previous studies have examined gender imbalances in various online information environments. Glott et al. (2010) found that only 13 % of content contributors (editors) on Wikipedia are female, yet female readership accounts for 25 %. Lam et al. (2011) examined gender imbalances in the English edition of Wikipedia and discovered a significant gap in the number of content contributors as well as other differences. They found that Wikipedia is less successful in converting female readers to content contributors than converting males. This gap has remained over time. There has been a substantial gender gap in Wikipedia participation: while women made up about 47 % of readers around 2009, only about 16 % of new editors identified as female, a proportion that has shown little to no change through 2011. In terms of content, Farič et al. (2024) recently found that of the 1,000 most popular English-language Wikipedia health articles sampled, an overwhelming majority (93.3 %) were not sex-specific (i.e., targeted to a certain gender), with only a small percentage (6.7 %) being sex-specific. In addition, there were more articles about females than articles about males. Female-category articles were also perceived as more important but had a lower number of edits than male-category articles.

Research has also shown differences among Black editors in their perceptions and motivations to contribute to Wikipedia content. Ju and Stewart (2019) found that Black male editors are more motivated to contribute than Black female editors. Additionally, male college students use Wikipedia more frequently than female students and perceive a lower level of risk associated with the platform (Lim and Kwon 2010). Male students also reported more positive perceptions of Wikipedia’s content and its quality of information compared to female students. Research has also revealed gender disparities on other social platforms. Kircaburun (2016) found that Twitter addiction levels of undergraduate male college students in Turkey were significantly higher than those of female students. The study also showed that gender was a positive predictor of Twitter addiction while agreeableness, conscientiousness, and extraversion were negative predictors. Holmberg and Hellsten (2015) also observed gender differences during a climate change debate on Twitter. They found that female users on Twitter tended to show more interest and belief in the anthropogenic impact on climate change and in campaigns and organizations involved in action. In contrast, male users were more concerned with the politics related to the climate change debate. Other studies have explored gender gaps related to online interaction behaviors (Küchler et al. 2023), Korean online news commentaries (Baek et al. 2021), and social media adoption (Nesi and Prinstein 2015).

In terms of race, few studies have examined people of color and their interactions with Wikipedia. Research by Stewart and Ju (2020) and Ju and Stewart (2019) focused on Black Wikipedians’ motivations for contributing content and the community’s perceptions of information bias in the online encyclopedia. A growing body of research has also focused on biases in Wikipedia with respect to authorship and content representation. Lemieux et al.’s (2023) recent study of academics’ biographies found that White women and people of color had their pages marked for deletion at a significantly higher rate than White men. In addition, a study by Kim et al. (2021) on the visual representation (photographs) of skin diseases in Wikipedia found an “underrepresentation” of images depicting dermatological conditions of people of color.

Several studies have examined the trust and credibility of the information on Wikipedia based on age differences. Flanagin and Metzger (2011) found that both children (11–18 years) and adults (18+ years) viewed Wikipedia as less credible than Encyclopedia Britannica and Citizendium. Despite children’s developmental and cognitive constraints, they showed some skepticism towards online media. A study in France by Mothe and Sahut (2018) revealed that trust in Wikipedia also varied by education level, with college students showing higher trust for academic tasks than high school and bachelor’s students, while master’s students were more mistrustful. Huisman et al. (2020) also explored why older adults (50+) in Belgium used Wikipedia for health information, noting its convenience, coverage, up-to-date content, and comprehensibility.

The current study aims to explore Wikipedia users’ perceived health information quality and their perceived differences based on gender, age, and race and ethnicity. We focus on health topics in the English Wikipedia, comparing perceptions between two age groups: 18–39 years and 40 years or older. Scholars have noted the disparities in Wikipedia authorship which overwhelmingly skews to male contributors, of European descent, who reside in the global North. This imbalance results in unbalanced and biased content, and underrepresentation of diverse perspectives on certain health topics and reinforcement of gender stereotypes. Therefore, it is crucial that we better understand how categories of differences, with respect to gender, age, and racialized identity, impact users’ notions of the perceived quality of health information on Wikipedia. The current study employed a quantitative survey method to address the following research questions:

RQ1:

What is the overall perceived level of health information quality?

RQ2:

Are there any differences in perceived levels of health information quality by gender, age, and race?

3 Research Methods

This study conducted an online survey to examine the perceptions of information quality of users who read and/or contribute health information on Wikipedia based on age, gender, and racial differences. The survey data underwent analysis through descriptive statistics and multivariate analysis of variance (MANOVA) to explore statistically significant differences in the perceptions of different social groups.

3.1 Study Participants and Recruitment

The study recruited 321 participants who either read or contributed health content to Wikipedia (English Edition) in the past two years. We used Qualtrics Panel Services (https://www.qualtrics.com/research-services/), which is a widely used crowdsourcing platform, to recruit a specifically defined group of research participants who would otherwise not be easily accessible. The efficiency and reliability of a crowdsourcing approach have been addressed in prior research (Gosling and Mason 2015; Weinberg et al. 2014). A purposive sampling method was employed because a specific group of participants was needed for the study. The online survey included questions on user demographics, use of other online health sites, and information quality factors. Participants were asked to rate their perceptions on a 7-point Likert scale ranging from 1 (strongly disagree) to 7 (strongly agree). Of the 321 respondents, 181 participants were female and 140 were male. The age of the participants ranged from 18 to 65 with the following groupings: 18–29 (74 participants; 23.05 %), 30–39 (77; 23.99 %), 40–49 (65; 20.25 %), 50–59 (57; 17.76 %), and over 60 (48; 14.95 %). Race and ethnic backgrounds within the sample included Asian/Pacific Islander/American Indian (25; 7.79 %), Black (non-Hispanic) (44; 13.71 %), Hispanic (41; 12.77 %), White (non-Hispanic) (199; 61.99 %), multiracial (8; 2.49 %), and other (4; 1.25 %).

Prior to participant recruitment, this study complied with all relevant national regulations and institutional policies and received approval from the authors’ Institutional Review Board (IRB). Informed consent was obtained from all individuals who participated in the study.

3.2 Measures

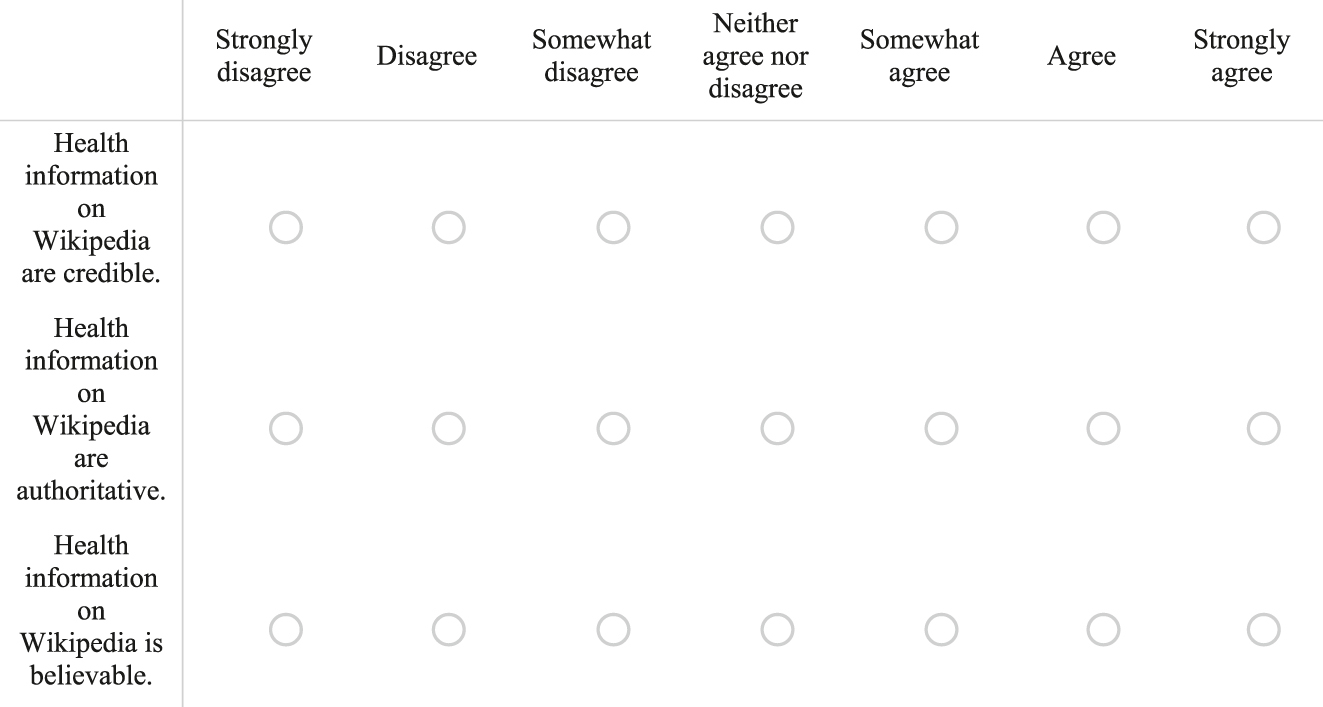

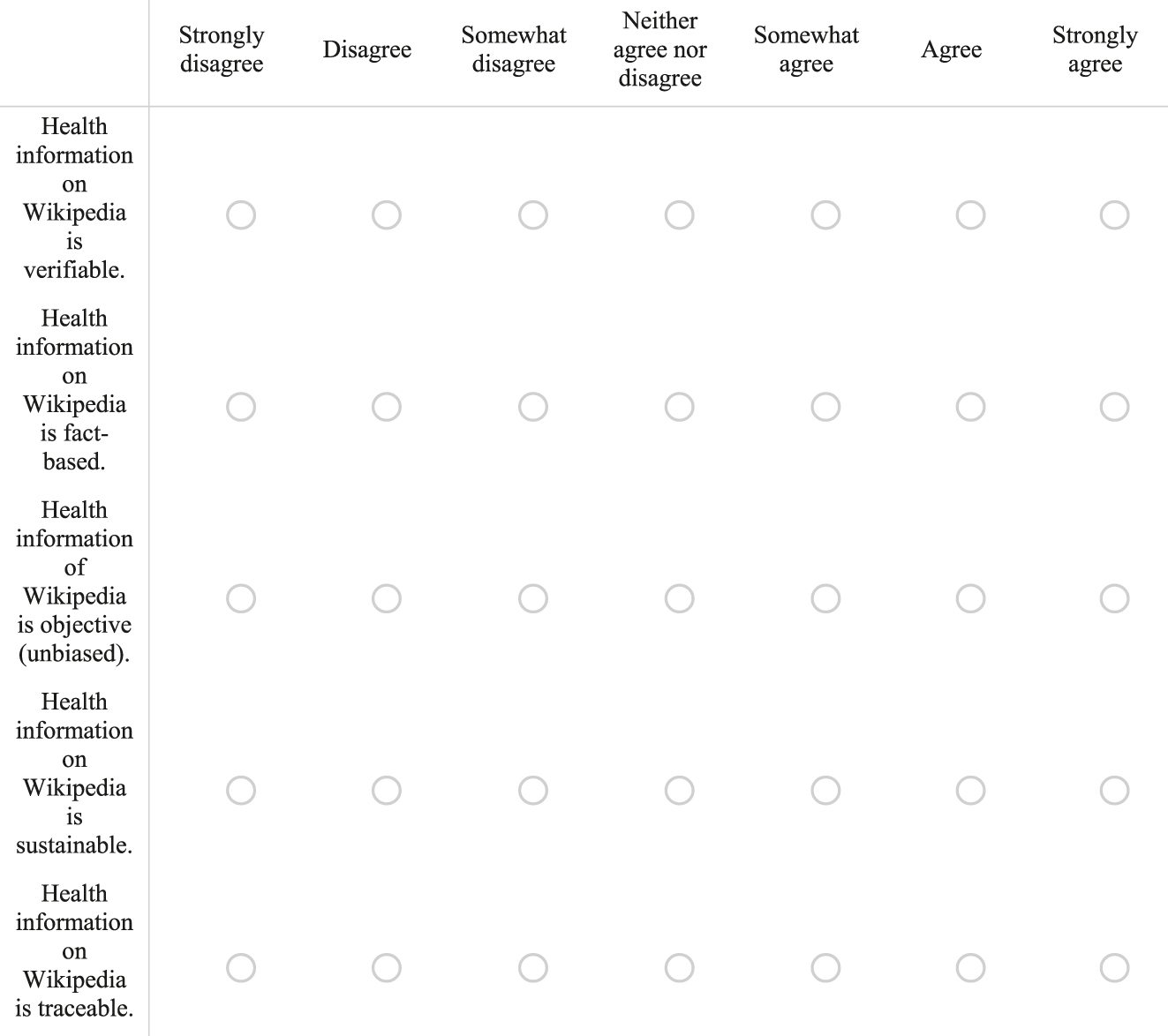

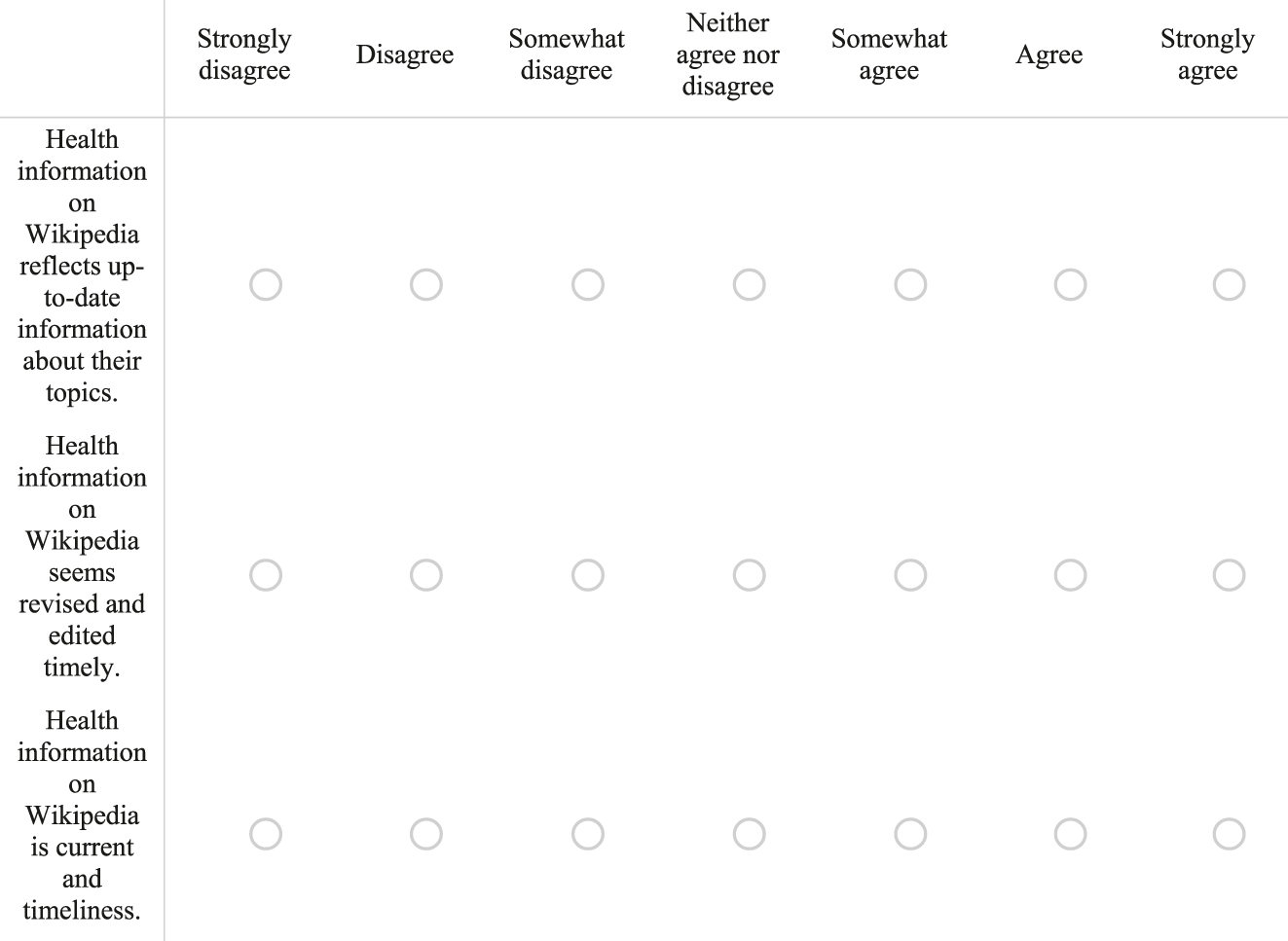

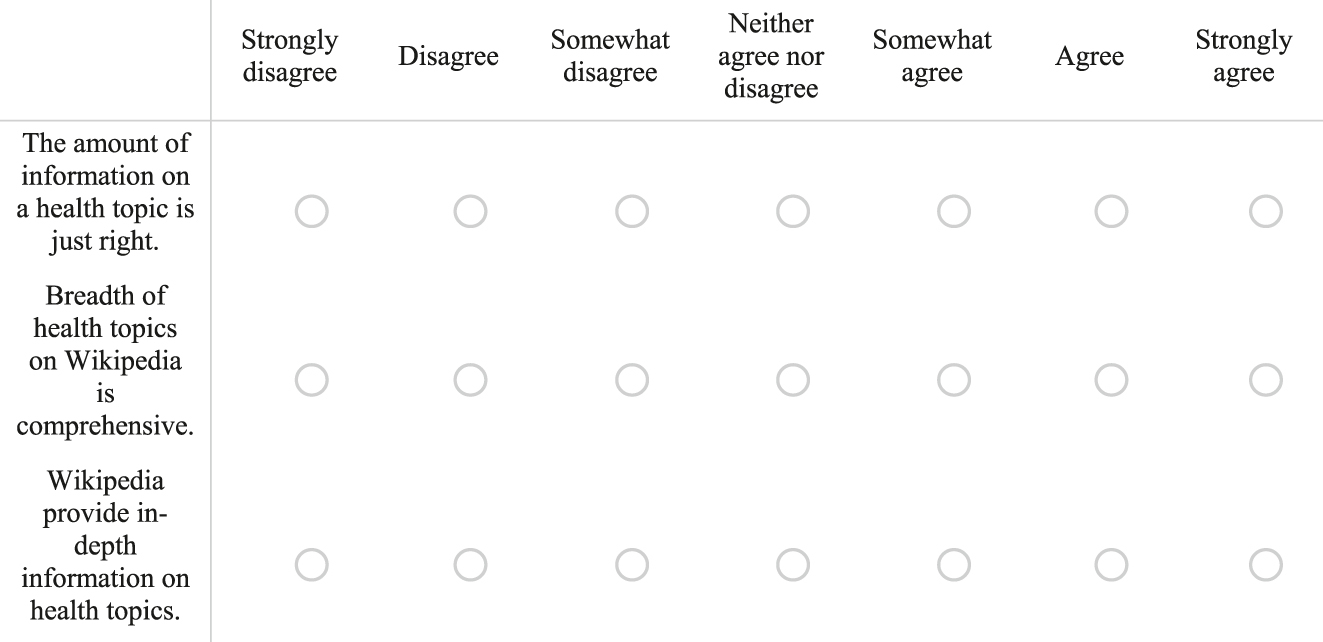

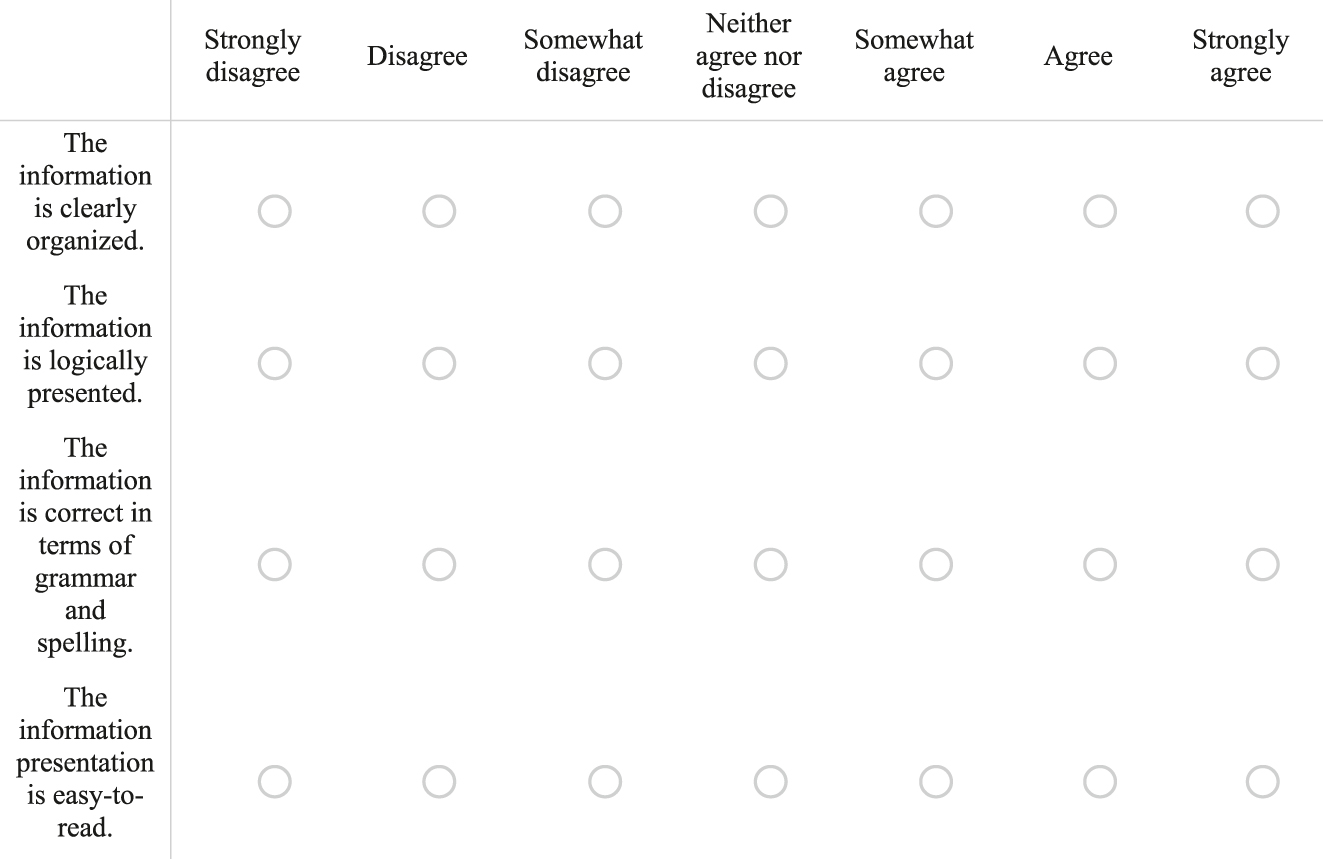

The following five measures for this study were drawn from prior research (Koo et al. 2011; Stvilia et al. 2009; Wang and Strong 1996) and adapted for this study (Figure A1). A total of eighteen items were used in the data collection and analysis, excluding demographic questions. Participants were asked to rate information quality factors on a 7-point Likert scale ranging from 1 (strongly disagree) to 7 (strongly agree). We measured participants’ perceptions of the level of Trustworthiness using three items: “health information on Wikipedia is credible”; “health information on Wikipedia is authoritative”; and “health information on Wikipedia is believable.” Participants’ perceptions of Reliability were assessed by five items: “health information on Wikipedia is verifiable”; “health information on Wikipedia is fact-based”; “health information of Wikipedia is objective (unbiased)”; “health information on Wikipedia is sustainable”; and “health information on Wikipedia is traceable.” We measured the perceived Currency using three items: “health information on Wikipedia reflects up-to-date information about their topics”; “health information on Wikipedia is revised and edited timely”; and “health information on Wikipedia is current and timeliness” (exact terms used in the study). Topic Coverage was measured with three items: “the amount of information on a health topic is just right”; “the breadth of health topics on Wikipedia is comprehensive”; and “Wikipedia provides in-depth information on health topics.” Information Presentation was assessed with four items: “the information is clearly organized”; “the information is logically presented”; “the information is correct in terms of grammar and spelling”; and “the information presentation is easy to read.”

3.3 Data Analysis

The analysis focused on gender, age, and race differences among Wikipedia readers and/or content contributors of health information on Wikipedia. Collected data on perceived information quality were analyzed quantitatively. For RQ1, descriptive statistics were used to describe the baseline levels of the participants’ perceptions of trustworthiness, reliability, currency, topic coverage, and information presentation. For RQ2, a MANOVA was used to test differences in the five perceived information quality factors (dependent variables) according to gender, age, and race (independent variables).

MANOVA serves as an extension of univariate analysis of variance (ANOVA). It is specifically applied when investigating group differences across two or more dependent variables (Stevens 2002). This technique allowed us to test for statistically significant disparities on a composite of these dependent variables. Unlike the alternative of performing multiple individual ANOVAs – a practice known to escalate the probability of a Type I error (i.e., falsely rejecting a true null hypothesis) – MANOVA explicitly accounts for the intercorrelations among the dependent measures. This simultaneous, multivariate approach mitigates Type I error inflation, yielding a more accurate and reliable overall assessment of group-level distinctions (Stevens 2002).
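The Type I error inflation described above can be illustrated with a short calculation. The sketch below assumes, for simplicity, that the five univariate tests are independent and each run at the 0.05 level; because the dependent variables in this study are correlated, the actual inflation would differ, so the figure is illustrative only. The function name is hypothetical.

```python
# Familywise Type I error rate for k separate tests, each at level alpha.
# Assumption (not from the study): the k tests are statistically independent.
def familywise_error_rate(alpha: float, k: int) -> float:
    """Probability of at least one false rejection across k independent tests."""
    return 1.0 - (1.0 - alpha) ** k


alpha = 0.05               # per-test significance level
n_dependent_variables = 5  # the five information quality factors

fwer = familywise_error_rate(alpha, n_dependent_variables)
print(f"{fwer:.3f}")  # 0.226
```

Under this simplifying assumption, running five separate ANOVAs would raise the chance of at least one false positive from 5 % to roughly 23 %.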

SPSS (version 28) was used to conduct the MANOVA to compare the effects of gender, age, and race on each of the five information quality variables. The age groups were categorized into a younger group (151 participants, 18–39 years) and an older group (170 participants, 40–69 years). The race groups were divided into White participants (199) and non-White participants (122; American Indian, Asian, Black, Hispanic, Multiracial, and Other). For the analysis, age and race groups were collapsed into two broader categories, as the number of participants in each original group was unevenly distributed, which limited the feasibility of making valid comparisons among all categories.

4 Results

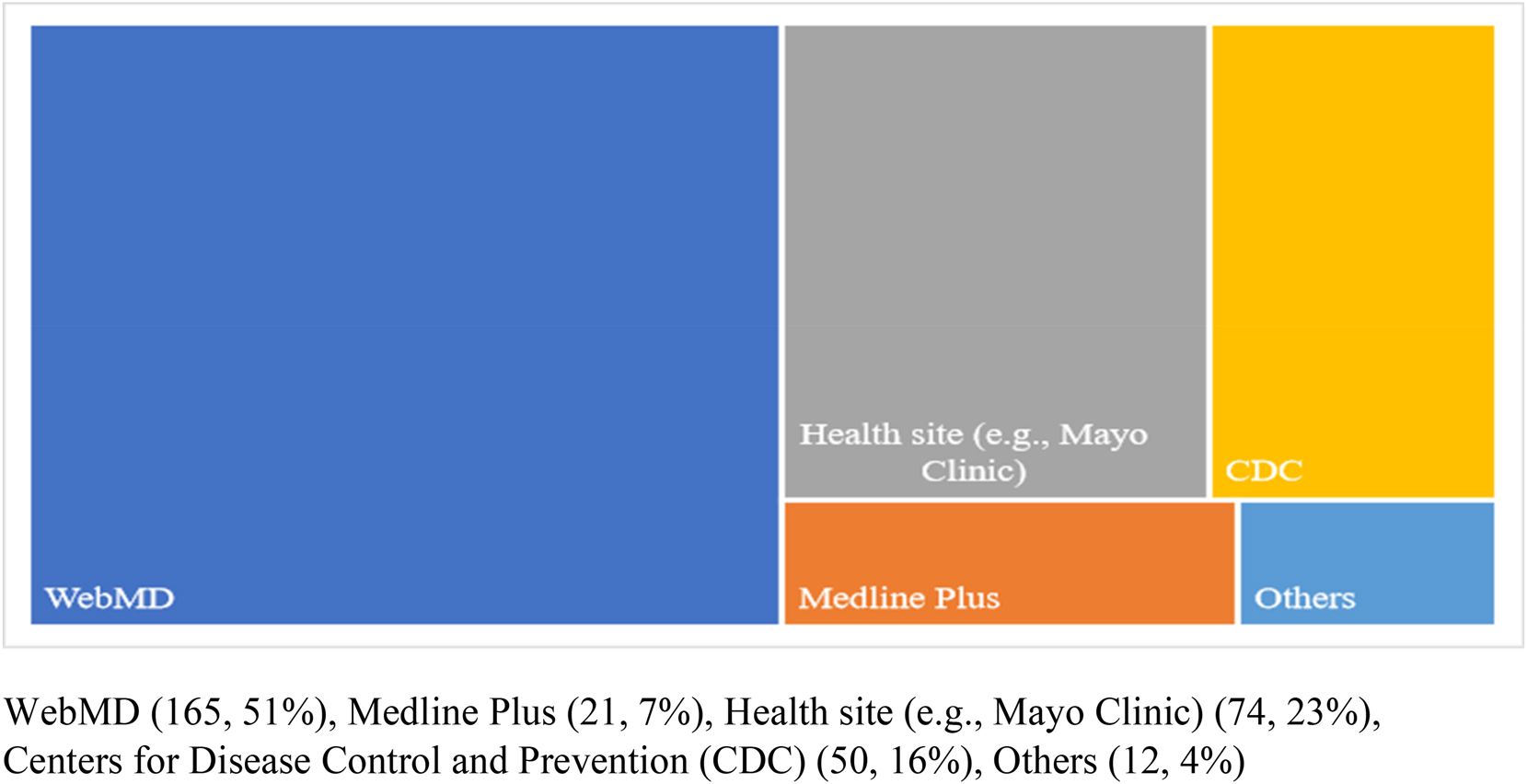

We asked survey respondents which online health information sites, apart from Wikipedia, they used most frequently to fulfill their health information needs. Besides Wikipedia, people mainly sought health information from several prominent online sources. WebMD was visited most often, followed by popular health sites such as Mayo Clinic, the Centers for Disease Control and Prevention (CDC), and Medline Plus, while others found sites through search engines like Google or Bing (Figure 1).

Figure 1. Health information websites visited by respondents to meet their health information needs.

4.1 Descriptive Analysis

Participants’ perceptions of information quality were assessed across five dimensions: trustworthiness, reliability, currency, topic coverage, and information presentation. Descriptive statistics, including means, standard deviations (SD), and correlations, were calculated. The mean scores for these dimensions ranged from 4.838 to 5.435, and the SD ranged from 1.053 to 1.208. The correlations among all variables were statistically significant (p < 0.05). Among the information quality dimensions, the strongest correlation was observed between trustworthiness and reliability (r = 0.816), followed by the correlations between currency and topic coverage (r = 0.747), between reliability and currency (r = 0.738), and between reliability and topic coverage (r = 0.736).

To assess the assumption of multivariate normality, we examined skewness and kurtosis values for the information quality dimensions (Table 1). The skewness values ranged from −0.597 to −0.113, and kurtosis values ranged from −0.771 to −0.085. Consistent with Kline’s (2011) recommendations (|skewness| < 3; |kurtosis| < 10), these values indicated that the collected data adequately met the assumption of multivariate normality.

Table 1. Descriptive statistics, means, standard deviations, and intercorrelations among study variables.

| Variables | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| 1. Trustworthiness | – | | | | |
| 2. Reliability | 0.816* | – | | | |
| 3. Currency | 0.725* | 0.738* | – | | |
| 4. Topic coverage | 0.712* | 0.736* | 0.747* | – | |
| 5. Info presentation | 0.671* | 0.661* | 0.690* | 0.702* | – |
| Mean | 4.838 | 4.958 | 5.058 | 5.067 | 5.435 |
| Standard deviation (SD) | 1.208 | 1.094 | 1.053 | 1.121 | 1.071 |
| Skewness | −0.113 | −0.133 | −0.127 | −0.192 | −0.597 |
| Kurtosis | −0.671 | −0.445 | −0.362 | −0.771 | −0.085 |

n = 321. *p < 0.05.

4.2 Reliability and Validity

To assess the reliability of each construct, both Cronbach’s alpha and composite reliability (CR) were examined. Cronbach’s alpha coefficients ranged from 0.851 to 0.904, indicating strong internal consistency. CR values ranged from 0.910 to 0.939, exceeding the recommended threshold of 0.70 (Fornell and Larcker 1981). Convergent and discriminant validity were also evaluated. Standardized factor loadings for all items ranged from 0.811 to 0.925, and average variance extracted (AVE) values ranged from 0.726 to 0.837, exceeding the 0.50 criterion for convergent validity (Table 2). Discriminant validity was assessed using the Heterotrait-Monotrait Ratio of Correlations (HTMT), with all HTMT values falling below the recommended threshold of 0.85, supporting adequate discriminant validity (Henseler et al. 2015). As shown in Tables 2 and 3, the results collectively demonstrate satisfactory reliability and validity of the measures.
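As an illustration of how the convergent validity indices relate to the factor loadings, the sketch below recomputes AVE and CR from the standardized loadings reported in Table 2, using the standard Fornell and Larcker (1981) formulas. It assumes standardized loadings with uncorrelated error terms; the function names are hypothetical, and the study itself does not describe its computation beyond citing the thresholds.

```python
# Standardized factor loadings as reported in Table 2.
loadings = {
    "Trustworthiness": [0.925, 0.913, 0.907],
    "Reliability": [0.849, 0.898, 0.811, 0.877, 0.821],
    "Currency": [0.881, 0.871, 0.882],
    "Topic coverage": [0.864, 0.891, 0.884],
    "Info presentation": [0.892, 0.878, 0.874, 0.840],
}


def average_variance_extracted(lams):
    """AVE: mean of the squared standardized loadings."""
    return sum(lam ** 2 for lam in lams) / len(lams)


def composite_reliability(lams):
    """CR: (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    squared_sum = sum(lams) ** 2
    error_variance = sum(1.0 - lam ** 2 for lam in lams)
    return squared_sum / (squared_sum + error_variance)


results = {
    name: (composite_reliability(lams), average_variance_extracted(lams))
    for name, lams in loadings.items()
}

for name, (cr, ave) in results.items():
    print(f"{name}: CR = {cr:.3f}, AVE = {ave:.3f}")
```

The recomputed values agree with the reported CR and AVE to within rounding of the published loadings.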

Table 2. Factor loading, Cronbach’s alpha, composite reliability, and average variance extracted.

| Construct | Item | Factor loading | Cronbach’s alpha | Composite reliability | AVE |
|---|---|---|---|---|---|
| Trustworthiness | TR1 | 0.925 | 0.903 | 0.939 | 0.837 |
| | TR2 | 0.913 | | | |
| | TR3 | 0.907 | | | |
| Reliability | RE1 | 0.849 | 0.904 | 0.929 | 0.726 |
| | RE2 | 0.898 | | | |
| | RE3 | 0.811 | | | |
| | RE4 | 0.877 | | | |
| | RE5 | 0.821 | | | |
| Currency | CU1 | 0.881 | 0.851 | 0.910 | 0.771 |
| | CU2 | 0.871 | | | |
| | CU3 | 0.882 | | | |
| Topic coverage | TC1 | 0.864 | 0.853 | 0.911 | 0.774 |
| | TC2 | 0.891 | | | |
| | TC3 | 0.884 | | | |
| Info presentation | IP1 | 0.892 | 0.852 | 0.926 | 0.759 |
| | IP2 | 0.878 | | | |
| | IP3 | 0.874 | | | |
| | IP4 | 0.840 | | | |

Table 3. Heterotrait-monotrait ratio (HTMT) matrix for assessing discriminant validity of constructs.

| Variables | 1 | 2 | 3 | 4 | 5 |
|---|---|---|---|---|---|
| 1. Trustworthiness | – | 0.816 | 0.725 | 0.712 | 0.671 |
| 2. Reliability | – | – | 0.738 | 0.736 | 0.661 |
| 3. Currency | – | – | – | 0.747 | 0.690 |
| 4. Topic coverage | – | – | – | – | 0.702 |
| 5. Info presentation | – | – | – | – | – |

Given some relatively high factor loadings in Table 2, we assessed the potential for multicollinearity using variance inflation factor (VIF) values. Following Kline’s (2011) criterion, multicollinearity is typically considered problematic when tolerance values are below 0.10 or VIF values exceed 10. As shown in Table 4, all VIF values in this study ranged from 1.953 to 3.311, remaining comfortably within acceptable limits and indicating that multicollinearity was not a significant concern.
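Tolerance and VIF are reciprocals of each other (VIF = 1 / tolerance), so either column of the collinearity diagnostics can be derived from the other. The following sketch applies that identity to the tolerance values reported in Table 4 and checks them against the cutoffs cited above; the variable names are illustrative.

```python
# Tolerance values per item as reported in Table 4.
tolerance = {
    "TR1": 0.316, "TR2": 0.352, "TR3": 0.370,
    "RE1": 0.394, "RE2": 0.302, "RE3": 0.472, "RE4": 0.355, "RE5": 0.467,
    "CU1": 0.472, "CU2": 0.497, "CU3": 0.469,
    "TC1": 0.512, "TC2": 0.443, "TC3": 0.460,
    "IP1": 0.325, "IP2": 0.347, "IP3": 0.394, "IP4": 0.453,
}

# VIF is the reciprocal of tolerance.
vif = {item: 1.0 / tol for item, tol in tolerance.items()}

# Cutoffs cited in the text: tolerance below 0.10 or VIF above 10 is problematic.
flagged = [item for item in tolerance if tolerance[item] < 0.10 or vif[item] > 10]

print(f"min VIF = {min(vif.values()):.3f}")  # 1.953
print(f"max VIF = {max(vif.values()):.3f}")  # 3.311
print("flagged items:", flagged)             # []
```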

Table 4. Collinearity diagnostics: tolerance and variance inflation factor (VIF) for variables.

| Construct | Item | Tolerance | VIF |
|---|---|---|---|
| Trustworthiness | TR1 | 0.316 | 3.165 |
| | TR2 | 0.352 | 2.841 |
| | TR3 | 0.370 | 2.703 |
| Reliability | RE1 | 0.394 | 2.538 |
| | RE2 | 0.302 | 3.311 |
| | RE3 | 0.472 | 2.119 |
| | RE4 | 0.355 | 2.817 |
| | RE5 | 0.467 | 2.141 |
| Currency | CU1 | 0.472 | 2.119 |
| | CU2 | 0.497 | 2.012 |
| | CU3 | 0.469 | 2.132 |
| Topic coverage | TC1 | 0.512 | 1.953 |
| | TC2 | 0.443 | 2.257 |
| | TC3 | 0.460 | 2.174 |
| Info presentation | IP1 | 0.325 | 3.077 |
| | IP2 | 0.347 | 2.882 |
| | IP3 | 0.394 | 2.538 |
| | IP4 | 0.453 | 2.208 |

4.3 Group Comparison

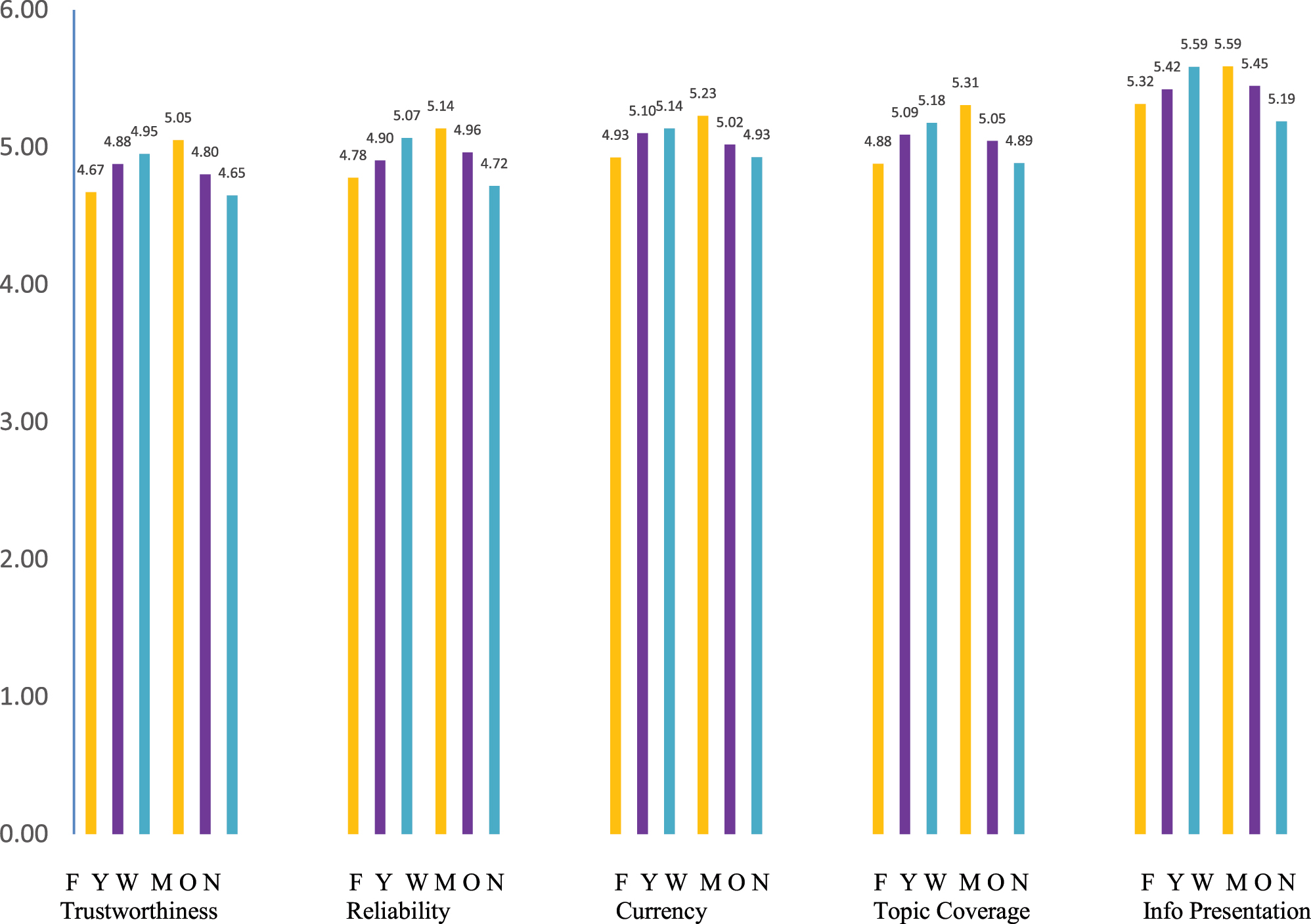

Perceptions of the five information quality factors, together with an overall score, were calculated by gender, age, and race (see Table 5). Both male and female participants rated the overall quality of health information articles on Wikipedia as moderately positive (5.263 and 4.915 out of 7 points, respectively). Younger and older participants agreed that Wikipedia health information is trustworthy (4.878 and 4.803), reliable (4.904 and 4.963), current (5.102 and 5.019), comprehensive (5.091 and 5.045), and logically presented and easy to read (5.423 and 5.446). Among the race groups, White participants’ highest rating was for information presentation (5.587), which exceeded non-White participants’ ratings on every information quality factor. For all five factors, male and White participants tended to give higher ratings than female and non-White participants (Figure 2).

Table 5. Mean and SD for information quality factors by gender, age, and race.

| Information quality factor | Gender | Mean | SD | Age | Mean | SD | Race | Mean | SD |
|---|---|---|---|---|---|---|---|---|---|
| Trustworthiness | Female | 4.672 | 1.193 | Younger | 4.878 | 1.232 | White | 4.953 | 1.158 |
| | Male | 5.052 | 1.197 | Older | 4.803 | 1.189 | Non-White | 4.650 | 1.268 |
| Reliability | Female | 4.779 | 1.134 | Younger | 4.904 | 1.093 | White | 5.068 | 0.994 |
| | Male | 5.137 | 1.162 | Older | 4.963 | 1.098 | Non-White | 4.718 | 1.214 |
| Currency | Female | 4.926 | 1.063 | Younger | 5.102 | 0.981 | White | 5.137 | 1.028 |
| | Male | 5.229 | 1.158 | Older | 5.019 | 1.114 | Non-White | 4.929 | 1.084 |
| Topic coverage | Female | 4.880 | 1.136 | Younger | 5.091 | 1.038 | White | 5.178 | 1.131 |
| | Male | 5.307 | 1.178 | Older | 5.045 | 1.191 | Non-White | 4.885 | 1.085 |
| Info presentation | Female | 5.316 | 1.122 | Younger | 5.423 | 1.076 | White | 5.587 | 0.968 |
| | Male | 5.589 | 1.062 | Older | 5.446 | 1.069 | Non-White | 5.189 | 1.182 |
| Overall info quality | Female | 4.915 | 0.923 | Younger | 5.080 | 0.932 | White | 5.185 | 0.925 |
| | Male | 5.263 | 1.015 | Older | 5.055 | 1.019 | Non-White | 4.874 | 1.034 |
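The “Overall info quality” means in Table 5 appear consistent with an unweighted average of the five factor means within each group. This is an observation drawn from the reported figures, not a procedure the study states, so the sketch below should be read as a consistency check under that assumption.

```python
# Group means per factor as reported in Table 5, in the order:
# trustworthiness, reliability, currency, topic coverage, info presentation.
factor_means = {
    "Female": [4.672, 4.779, 4.926, 4.880, 5.316],
    "Male": [5.052, 5.137, 5.229, 5.307, 5.589],
    "Younger": [4.878, 4.904, 5.102, 5.091, 5.423],
    "Older": [4.803, 4.963, 5.019, 5.045, 5.446],
    "White": [4.953, 5.068, 5.137, 5.178, 5.587],
    "Non-White": [4.650, 4.718, 4.929, 4.885, 5.189],
}

# Assumed definition: overall score = unweighted mean of the five factor means.
overall = {group: sum(vals) / len(vals) for group, vals in factor_means.items()}

for group, value in overall.items():
    print(f"{group}: {value:.3f}")
```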

Figure 2. Means of information quality factors based on gender, age, and race. Note: Gender (yellow), age (purple), race (blue): F (female), Y (younger), W (White), M (male), O (older), and N (non-White).

4.4 MANOVA Results

Our findings indicated that Wikipedia users’ overall perceptions differed significantly by gender and race. Results of the MANOVA (Table 6) showed significant differences in overall information quality between the gender groups (F = 10.290, df = 1, p = 0.011) and between the racial groups (F = 7.780, df = 1, p = 0.006) at the 0.05 level, while there was no significant difference between the age groups (F = 0.050, df = 1, p = 0.823). In the gender groups, male users rated overall health information quality on Wikipedia higher (5.263) than female users did (4.915), and this pattern held for all five factors. In other words, male participants tended to perceive that the quality of health information in Wikipedia articles was higher based on trustworthiness, reliability, currency, topic coverage, and information presentation. In the race groups, White users’ perceptions were higher than non-White users’ perceptions in trustworthiness, reliability, topic coverage, and information presentation. White users perceived Wikipedia information as more trustworthy, reliable, comprehensive, and organized compared to users from other racial groups. However, perceptions of currency did not differ significantly by race, suggesting that users across racial groups held similar views regarding how well Wikipedia information reflects current trends.

Multivariate analysis of variance (MANOVA) results for group differences across variables.

| Independent variable | Dependent variables | Sum of squares | df | Mean square | F | Sig. |
|---|---|---|---|---|---|---|
| Gender | Trustworthiness | 11.410 | 1 | 11.410 | 7.991 | 0.005* |
| | Reliability | 10.125 | 1 | 10.125 | 8.657 | 0.003* |
| | Currency | 7.211 | 1 | 7.211 | 6.616 | 0.011* |
| | Topic coverage | 14.383 | 1 | 14.383 | 11.839 | <0.001* |
| | Info presentation | 5.883 | 1 | 5.883 | 5.201 | 0.023* |
| | Overall info quality | 9.564 | 1 | 9.564 | 10.290 | 0.011* |
| Age | Trustworthiness | 0.445 | 1 | 0.445 | 0.305 | 0.581 |
| | Reliability | 0.274 | 1 | 0.274 | 0.228 | 0.633 |
| | Currency | 0.547 | 1 | 0.547 | 0.492 | 0.483 |
| | Topic coverage | 0.171 | 1 | 0.171 | 0.136 | 0.713 |
| | Info presentation | 0.041 | 1 | 0.041 | 0.035 | 0.851 |
| | Overall info quality | 0.048 | 1 | 0.048 | 0.050 | 0.823 |
| Race | Trustworthiness | 6.936 | 1 | 6.936 | 4.810 | 0.029* |
| | Reliability | 9.281 | 1 | 9.281 | 7.918 | 0.005* |
| | Currency | 3.284 | 1 | 3.284 | 2.980 | 0.085 |
| | Topic coverage | 6.462 | 1 | 6.462 | 5.213 | 0.023* |
| | Info presentation | 11.990 | 1 | 11.990 | 10.780 | 0.001* |
| | Overall info quality | 7.287 | 1 | 7.287 | 7.780 | 0.006* |

*p < 0.05.
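Each row of Table 6 has the layout of a univariate F test: a sum of squares, its degrees of freedom, the resulting mean square, and the F ratio. The following is a minimal sketch of how those columns relate, written in plain Python. The ratings are made up for illustration and are not the study's data, the function name `one_way_f` is hypothetical, and the source does not state which statistical software produced the table.

```python
from statistics import mean


def one_way_f(groups):
    """Compute the between-groups quantities of a one-way ANOVA table."""
    all_values = [x for g in groups for x in g]
    grand_mean = mean(all_values)

    # Between-groups sum of squares: group size times squared distance
    # of each group mean from the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-groups (error) sum of squares: squared distance of each
    # observation from its own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    ms_between = ss_between / df_between
    ms_within = ss_within / df_within

    return {
        "ss_between": ss_between,
        "df_between": df_between,
        "ms_between": ms_between,
        "ms_within": ms_within,
        "F": ms_between / ms_within,
    }


# Hypothetical 7-point ratings for two groups (NOT the study's data).
group_a = [5, 6, 5, 7, 6, 5]
group_b = [4, 5, 4, 5, 6, 4]

row = one_way_f([group_a, group_b])
print(row["df_between"], round(row["ss_between"], 3), round(row["F"], 3))
```

With two groups the between-groups df is 1, so the mean square equals the sum of squares, which is the pattern visible in every row of Table 6.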

Post hoc comparisons using the Tukey honestly significant difference (HSD) test revealed statistically significant pairwise differences by gender and race (p < 0.05), as shown in Table 7. These findings indicate that perceptions of information quality significantly differed by gender. Similarly, significant differences were observed between White and non-White participants across most dimensions of information quality, with the exception of currency (the extent to which information reflects current trends).

Post hoc comparisons among gender and racial groups using Tukey HSD test.

| Independent variable | Dependent variables | Mean difference | Standard error | 95 % CI | p | Significant pairwise differences |
|---|---|---|---|---|---|---|
| Gender | Trustworthiness | −0.373 | 0.134 | [−0.534, −0.090] | 0.006* | Male > female |
| | Reliability | −0.353 | 0.121 | [−0.549, −0.104] | 0.004* | Male > female |
| | Currency | −0.294 | 0.117 | [−0.503, −0.059] | 0.013* | Male > female |
| | Topic coverage | −0.422 | 0.124 | [−0.607, −0.161] | <0.001* | Male > female |
| | Info presentation | −0.265 | 0.119 | [−0.471, −0.028] | 0.027* | Male > female |
| Race | Trustworthiness | 0.308 | 0.138 | [0.030, 0.483] | 0.026* | White > non-White |
| | Reliability | 0.353 | 0.124 | [0.099, 0.553] | 0.005* | White > non-White |
| | Topic coverage | 0.294 | 0.128 | [0.038, 0.491] | 0.022* | White > non-White |
| | Info presentation | 0.404 | 0.121 | [0.156, 0.611] | <0.001* | White > non-White |

CI, confidence interval; *p < 0.05.
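One way to read Table 7 is that a pairwise difference is significant at the 0.05 level when its 95 % confidence interval excludes zero, and that the sign of the mean difference gives the direction. The sketch below checks both for the rows transcribed from the table. The sign convention (female minus male, White minus non-White) is an assumption inferred from the last column, not something the text states, and the helper names are hypothetical.

```python
# Rows transcribed from Table 7:
# (independent variable, dimension, mean difference, CI lower, CI upper)
TABLE_7 = [
    ("Gender", "Trustworthiness", -0.373, -0.534, -0.090),
    ("Gender", "Reliability", -0.353, -0.549, -0.104),
    ("Gender", "Currency", -0.294, -0.503, -0.059),
    ("Gender", "Topic coverage", -0.422, -0.607, -0.161),
    ("Gender", "Info presentation", -0.265, -0.471, -0.028),
    ("Race", "Trustworthiness", 0.308, 0.030, 0.483),
    ("Race", "Reliability", 0.353, 0.099, 0.553),
    ("Race", "Topic coverage", 0.294, 0.038, 0.491),
    ("Race", "Info presentation", 0.404, 0.156, 0.611),
]

# Assumed sign convention (not stated in the article):
# Gender = female - male, Race = White - non-White.
LABELS = {
    "Gender": ("Female > male", "Male > female"),
    "Race": ("White > non-White", "Non-White > White"),
}


def ci_excludes_zero(lower, upper):
    """True when the whole interval lies on one side of zero."""
    return lower > 0 or upper < 0


def direction(variable, mean_difference):
    """Map the sign of a mean difference to a readable label."""
    positive, negative = LABELS[variable]
    return positive if mean_difference > 0 else negative


for variable, dimension, diff, lower, upper in TABLE_7:
    print(variable, dimension, ci_excludes_zero(lower, upper), direction(variable, diff))
```

Under the assumed sign convention, the derived labels agree with the table's "Significant pairwise differences" column for every row.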

5 Discussion and Conclusion

The aim of this study was to investigate differences in the perceived quality of health information on Wikipedia based on gender, age, and racial identity. These factors matter because Wikipedia is widely consulted for health information by a diverse readership. Without equitable representation among its editors and authors, the online encyclopedia risks failing to meet the unique and diverse information needs of its global users. Previous studies have examined the suitability of Wikipedia’s health content as viewed by various user groups, including consumer health users, medical students, and healthcare professionals. However, the findings of these studies have limitations, making it difficult to draw consistent conclusions. They often focus narrowly on specialized medical topics, suffer from low response rates, or are affected by the dynamic nature of Wikipedia’s medical and health content (Smith 2020). Moreover, few studies have explored perspectives across diverse demographic backgrounds, which motivated us to investigate these viewpoints more comprehensively.

In answering RQ1, on the general perception of health information on Wikipedia, our findings reveal that both male and female participants assessed the information quality of Wikipedia health articles positively. However, our analysis of RQ2, which sought to understand differences in information quality perception by age, gender, and racial identity, produced more complicated findings. Age did not produce any statistically significant differences. In contrast, a statistically significant gender effect was observed in users’ perceptions of trustworthiness, reliability, currency, information presentation, and breadth of coverage. Males, in general, rated health information quality higher than females. Our results are supported by previous studies. Lim and Kwon (2010) found that among college students, males had higher perceptions of Wikipedia’s information quality and greater overall belief in the Wikipedia project. This pattern is likely related to the overrepresentation of males among Wikipedia contributors and is not surprising, given that male contributors create the content that most interests their own gender group.

Prior studies have reported significant gender imbalances in the context of Wikipedia, with only around 10 % female editors (Antin et al. 2011; Ford and Wajcman 2017) and an overrepresentation of males (Hargittai and Shaw 2015). Other factors contributing to this imbalance include the level of conflict involved in the editing process, confidence in expertise and value of contributions, and attitudes towards accepting criticism (Collier and Bear 2012). Hence, addressing gender differences in perceptions of health information quality on Wikipedia is a significant issue that calls attention to the potential for variations in the way people evaluate, trust, and use information on Wikipedia.

Lastly, regarding gender, our sociodemographic lens was particularly useful. Individuals who are White and male rated every category higher than females and non-White participants. Regarding our research question about racial identity and health information quality, White Wikipedians rated all factors, except currency, higher than non-Whites. These last two results suggest that there are two distinct perceptions of health information quality. Non-White Wikipedians have lower perceptions and thus have a different user experience that is likely somewhat related to trustworthiness and topic coverage. Ju and Stewart’s (2019) study of Black Wikipedians, for example, found that this population of users had concerns about the trustworthiness and topical coverage of the content related to their community and their experiences. For some Black American authors, for example, their contributions were seen as a “bulwark against information bias” in a repository that was seen as “overwhelmingly White.”

Another factor that may influence non-White Wikipedians’ lower perception of topical coverage is that, in some instances, the information sought is not available. Dermatological photographs on Wikipedia are one example. Kim et al. (2021, n.p.) noted that “many of the Wikipedia pages [on skin diseases] often do not offer adequate photo representation” of diverse skin tones. They emphasized that this lack of representation is problematic because “certain conditions such as melanoma, […] psoriasis, and acne can present visually differently in people with darker skin compared to people with lighted skin.” The absence of inclusive photographs means not only that people with darker skin tones are unable to use Wikipedia effectively as a health resource but also that Wikipedia becomes a barrier to health information for these populations.

Many health services and procedures are specific to gender, and other treatments may be impacted by one’s racial background. Thus, it is important for users to have equal levels of perceived quality in the health resources available to them, particularly in an everyday information repository like Wikipedia. Users’ perceptions of lower quality health information on Wikipedia may lead to further marginalization and invisibility when accessing the resources they need to make important health decisions.

This study has several implications for users and content developers on Wikipedia. First, new insights into gender and race-based differences in perceptions of health information on Wikipedia can help enhance the quality and credibility of the content and help ensure that Wikipedia is perceived by diverse audiences as trustworthy and reliable with adequate topical coverage. Second, increasing the participation of women and underrepresented populations as stakeholders in Wikipedia is a vital step towards promoting a more balanced representation of content and perspectives in the repository. The study’s findings emphasize the significance of considering users’ perceptions of information quality when utilizing online sources for research or other purposes, underscoring the importance of promoting gender diversity and inclusion on digital platforms.

6 Limitations and Future Endeavors

Despite the important findings, the current study has limitations. First, our focus is solely on the English version of Wikipedia, so our findings apply only to the English edition. The French or Dutch versions of Wikipedia, for example, would represent different entities in terms of content, scope of topic coverage, and the number of available articles. Additionally, our study specifically targets health and medical articles on Wikipedia. Given Wikipedia’s extensive range of topics, there could be variations in the perception of information quality for different subjects.

Numerous studies have been conducted on Wikipedia readers and editors from nondominant communities and the English edition of the online encyclopedia. It would be fruitful to explore a deeper understanding of how minoritized users perceive information quality on the platform in both health and everyday information seeking contexts. For example, future researchers could design a quantitative study on Wikipedia content related to specific health topics that are critical to Asian, Black, and Latino/x populations, such as cancer (breast, prostate, colorectal), diabetes, heart disease, hypertension, and sickle cell anemia. Additionally, future studies could explore potential differences in health information-seeking behavior on Wikipedia across different languages and in culturally bilingual contexts like Canada. Such research could be extended to determine if our findings are applicable to Wikipedia health content in these contexts and how to improve diverse populations’ perceptions of the content quality, trustworthiness, and reliability of the articles on Wikipedia. Furthermore, we acknowledge that our approach of creating two broader racial categories limited our ability to observe more specific race-based patterns. A larger sample size or continued purposive sampling may have provided an opportunity to identify more race-specific differences.

- Competing interest: None to declare.

- Research funding: None to declare.

- Data availability: The dataset used in this study is not publicly available, as participant consent did not include permission for data sharing with external parties.

Appendix – Questionnaire

What is your age?

- 18–19
- 20–29
- 30–39
- 40–49
- 50–59
- 60–65

What is your gender?

- Male
- Female
- Other

What is your racial background?

- American Indian/Native Alaskan
- Asian/Pacific Islander
- Black (non-Hispanic)
- Hispanic
- White (non-Hispanic)
- Multiracial
- Other

Other than Wikipedia, which online site do you use most frequently to meet your health information needs?

- WebMD
- Medline Plus
- Health site (e.g., Mayo Clinic)
- CDC (Centers for Disease Control and Prevention)
- Other

How do you feel about the quality of health information in Wikipedia articles?

- Trustworthiness
- Reliability
- Currency
- Topic Coverage
- Information Presentation

References

Antin, J., R. Yee, C. Cheshire, and O. Nov. 2011. “Gender Differences in Wikipedia Editing.” In Proceedings of the 7th International Symposium on Wikis and Open Collaboration, 11–4. San Francisco: ACM. https://doi.org/10.1145/2038558.2038561.

Baek, H., S. Lee, and S. Kim. 2021. “Are Female Users Equally Active? An Empirical Study of the Gender Imbalance in Korean Online News Commenting.” Telematics and Informatics 62: 101635. https://doi.org/10.1016/j.tele.2021.101635.

Bratland, K. M., C. Wien, and T. M. Sandanger. 2024. “Exploring Online Health Information Seeking Behaviour (OHISB) Among Young Adults: A Scoping Review Protocol.” BMJ Open 14 (1): e074894. https://doi.org/10.1136/bmjopen-2023-074894.

Colavizza, G. 2020. “COVID-19 Research in Wikipedia.” Quantitative Science Studies 1 (4): 1349–80. https://doi.org/10.1162/qss_a_00080.

Collier, B., and J. Bear. 2012. “Conflict, Criticism, or Confidence: An Empirical Examination of the Gender Gap in Wikipedia Contributions.” In Proceedings of the ACM 2012 Conference on Computer Supported Cooperative Work, Seattle, Washington, February 11–15, 383–92. ACM. https://doi.org/10.1145/2145204.2145265.

Di Novi, C., M. Kovacic, and C. E. Orso. 2024. “Online Health Information Seeking Behavior, Healthcare Access, and Health Status During Exceptional Times.” Journal of Economic Behavior & Organization 220: 675–90. https://doi.org/10.1016/j.jebo.2024.02.032.

Eysenbach, G., J. Powell, O. Kuss, and E. R. Sa. 2002. “Empirical Studies Assessing the Quality of Health Information for Consumers on the World Wide Web: A Systematic Review.” Journal of the American Medical Association 287 (20): 2691–700. https://doi.org/10.1001/jama.287.20.2691.

Falk, M., H. Ford, K. Tall, and T. Pietsch. 2023. How Australians Are Represented in Wikipedia (No. 1). Sydney: University of Technology.

Farič, N., H. W. W. Potts, and J. M. Heilman. 2024. “Quality of Male and Female Medical Content on English-Language Wikipedia: Quantitative Content Analysis.” Journal of Medical Internet Research 26: e47562. https://doi.org/10.2196/47562.

Finney Rutten, L. J., K. D. Blake, A. J. Greenberg-Worisek, S. V. Allen, R. P. Moser, and B. W. Hesse. 2019. “Online Health Information Seeking Among US Adults: Measuring Progress Toward a Healthy People 2020 Objective.” Public Health Reports 134 (6): 617–25. https://doi.org/10.1177/0033354919874074.

Flanagin, A. J., and M. J. Metzger. 2011. “From Encyclopaedia Britannica to Wikipedia: Generational Differences in the Perceived Credibility of Online Encyclopedia Information.” Information, Communication & Society 14 (3): 355–74. https://doi.org/10.1080/1369118X.2010.542823.

Ford, H., and J. Wajcman. 2017. “‘Anyone Can Edit’, Not Everyone Does: Wikipedia’s Infrastructure and the Gender Gap.” Social Studies of Science 47 (4): 511–27. https://doi.org/10.1177/0306312717692172.

Fornell, C., and D. F. Larcker. 1981. “Evaluating Structural Equation Models with Unobserved Variables with Measurement Error.” Journal of Marketing Research 18 (1): 39–50. https://doi.org/10.1177/002224378101800104.

Giles, J. 2005. “Special Report Internet Encyclopedias Go Head to Head.” Nature 438 (15): 900–1. https://doi.org/10.1038/438900a.

Glott, R., P. Schmidt, and R. Ghosh. 2010. “Wikipedia Survey – Overview of Results.” http://www.ris.org/uploadi/editor/1305050082Wikipedia_Overview_15March2010-FINAL.pdf (accessed May 5, 2025).

Gosling, S. D., and W. Mason. 2015. “Internet Research in Psychology.” Annual Review of Psychology 66: 877–902. https://doi.org/10.1146/annurev-psych-010814-015321.

Hargittai, E., and A. Shaw. 2015. “Mind the Skills Gap: The Role of Internet Know-How and Gender in Differentiated Contributions to Wikipedia.” Information, Communication & Society 18 (4): 424–42. https://doi.org/10.1080/1369118X.2014.957711.

Heilman, J., E. Kemmann, M. Bonert, A. Chatterjee, B. Ragar, G. M. Beards, et al. 2011. “Wikipedia: A Key Tool for Global Public Health Promotion.” Journal of Medical Internet Research 13 (1): e14. https://doi.org/10.2196/jmir.1589.

Henseler, J., C. M. Ringle, and M. Sarstedt. 2015. “A New Criterion for Assessing Discriminant Validity in Variance-Based Structural Equation Modeling.” Journal of the Academy of Marketing Science 43 (1): 115–35. https://doi.org/10.1007/s11747-014-0403-8.

Holmberg, K., and I. Hellsten. 2015. “Gender Differences in the Climate Change Communication on Twitter.” Internet Research 25 (5): 811–28. https://doi.org/10.1108/IntR-07-2014-0179.

Huisman, M., S. Joye, and D. Biltereyst. 2020. “To Share or Not to Share: An Explorative Study of Health Information Non-Sharing Behaviour Among Flemish Adults Aged Fifty and Over.” Information Research – An International Electronic Journal 25 (3). Paper 870. https://doi.org/10.47989/irpaper870.

Huisman, M., S. Joye, and D. Biltereyst. 2021. “Health on Wikipedia: A Qualitative Study of the Attitudes, Perceptions, and Use of Wikipedia as a Source of Health Information by Middle-Aged and Older Adults.” Information, Communication & Society 24 (12): 1797–813. https://doi.org/10.1080/1369118X.2020.1736125.

Jia, X., Y. Pang, and L. S. Liu. 2021. “Online Health Information Seeking Behavior: A Systematic Review.” Healthcare 9 (12): 1740. https://doi.org/10.3390/healthcare9121740.

Johnson, J. D. 1997. Cancer-Related Information Seeking. Hampton Press.

Ju, B., Y. Jung, and J. P. Bourgeois. 2024. “Wikipedia as an Online Health Information Source: Consumers’ Satisfaction with Information Quality.” Journal of Information Science Theory and Practice 12 (2): 36–48. https://doi.org/10.1633/JISTaP.2024.12.2.3.

Ju, B., and B. Stewart. 2019. “‘The Right Information’: Perceptions of Information Bias Among Black Wikipedians.” Journal of Documentation 75 (6): 1486–502. https://doi.org/10.1108/JD-02-2019-0031.

Ju, B., and J. B. Stewart. 2024. “Exploring Perceived Online Information Quality: A Mixed-Method Approach.” Journal of Documentation 80 (1): 239–54. https://doi.org/10.1108/JD-02-2023-0033.

Katerattanakul, P., and K. Siau. 2008. “Factors Affecting the Information Quality of Personal Web Portfolios.” Journal of the American Society for Information Science and Technology 59 (1): 63–76. https://doi.org/10.1002/asi.20717.

Kim, W., S. M. Wolfe, C. Zagona-Prizio, and R. P. Dellavalle. 2021. “Skin of Color Representation on Wikipedia: Cross-Sectional Analysis.” JMIR Dermatology 4 (2): e27802. https://doi.org/10.2196/27802.

Kircaburun, K. 2016. “Effects of Gender and Personality Differences on Twitter Addiction Among Turkish Undergraduates.” Journal of Education and Practice 7 (24): 33–42. https://www.iiste.org/Journals/index.php/JEP/article/view/32597/33488 (accessed May 20, 2025).

Kline, R. B. 2011. Principles and Practice of Structural Equation Modeling, 3rd ed. Guilford Press.

Koo, C., Y. Wati, K. Park, and M. K. Lim. 2011. “Website Quality, Expectation, Confirmation, and End User Satisfaction: The Knowledge-Intensive Website of the Korean National Cancer Information Center.” Journal of Medical Internet Research 13 (4): e81. https://doi.org/10.2196/jmir.1574.

Küchler, C., A. Stoll, M. Ziegele, and T. K. Naab. 2023. “Gender-Related Differences in Online Comment Sections: Findings from a Large-Scale Content Analysis of Commenting Behavior.” Social Science Computer Review 41 (3): 728–47. https://doi.org/10.1177/08944393211052042.

Lam, S. K., A. Uduwage, Z. Dong, S. Sen, D. R. Musicant, L. Terveen, et al. 2011. “WP: Clubhouse? An Exploration of Wikipedia’s Gender Imbalance.” In Proceedings of the 7th International Symposium on Wikis and Open Collaboration, 1–10. New York: ACM. https://doi.org/10.1145/2038558.2038560.

Langrock, I., and S. González-Bailón. 2022. “The Gender Divide in Wikipedia: Quantifying and Assessing the Impact of Two Feminist Interventions.” Journal of Communication 72 (3): 297–321. https://doi.org/10.1093/joc/jqac004.

Lee, S. M., P. Katerattanakul, and S. Hong. 2007. “Framework for user Perception of Effective e-tail Web Sites.” In Utilizing and Managing Commerce and Services Online, 288–312. IGI Global Scientific Publishing. https://doi.org/10.4018/978-1-59140-932-8.ch014.

Lemieux, M. E., R. Zhang, and F. Tripodi. 2023. “‘Too Soon’ to Count? How Gender and Race Cloud Notability Considerations on Wikipedia.” Big Data & Society 10 (1). https://doi.org/10.1177/20539517231165490.

Lewis, T. 2006. “Seeking Health Information on the Internet: Lifestyle Choice or Bad Attack of Cyberchondria?” Media, Culture & Society 28 (4): 521–39. https://doi.org/10.1177/0163443706065027.

Lewis, N., N. Shekter-Porat, and H. Nasir. 2021. “Health Information Seeking.” In The Routledge Handbook of Health Communication, edited by N. Lewis, N. Shekter-Porat, and H. Nasir, 399–411. Routledge. https://doi.org/10.4324/9781003043379-34.

Li, H., D. Li, M. Zhai, L. Lin, and Z. Cao. 2025. “Associations Among Online Health Information Seeking Behavior, Online Health Information Perception, and Health Service Utilization: Cross-Sectional Study.” Journal of Medical Internet Research 27: e66683. https://doi.org/10.2196/66683.

Lim, S., and N. Kwon. 2010. “Gender Differences in Information Behavior Concerning Wikipedia, an Unorthodox Information Source?” Library & Information Science Research 32 (3): 212–20. https://doi.org/10.1016/j.lisr.2010.01.003.

Lir, S. A. 2021. “Strangers in a Seemingly Open-to-All Website: The Gender Bias in Wikipedia.” Equality, Diversity and Inclusion: An International Journal 40 (7): 801–18. https://doi.org/10.1108/EDI-10-2018-0198.

Liu, D., S. Yang, C. Y. Cheng, L. Cai, and J. Su. 2024. “Online Health Information Seeking, eHealth Literacy, and Health Behaviors Among Chinese Internet Users: Cross-Sectional Survey Study.” Journal of Medical Internet Research 26: e54135. https://doi.org/10.2196/54135.

Ma, X., Y. Liu, P. Zhang, R. Qi, and F. Meng. 2023. “Understanding Online Health Information Seeking Behavior of Older Adults: A Social Cognitive Perspective.” Frontiers in Public Health 11: 1147789. https://doi.org/10.3389/fpubh.2023.1147789.

Mandiberg, M. 2023. “Wikipedia’s Race and Ethnicity Gap and the Unverifiability of Whiteness.” Social Text 41 (1): 21–46. https://doi.org/10.1215/01642472-10174954.

Mesgari, M., C. Okoli, M. Mehdi, F. Å. Nielsen, and A. Lanamäki. 2015. “‘The Sum of All Human Knowledge’: A Systematic Review of Scholarly Research on the Content of Wikipedia.” Journal of the Association for Information Science and Technology 66 (2): 219–45. https://doi.org/10.1002/asi.23172.

Miller, L. M. S., and R. A. Bell. 2012. “Online Health Information Seeking: The Influence of Age, Information Trustworthiness, and Search Challenges.” Journal of Aging and Health 24 (3): 525–41. https://doi.org/10.1177/0898264311428167.

Mothe, J., and G. Sahut. 2018. “How Trust in Wikipedia Evolves: A Survey of Students Aged 11 to 25.” Information Research 23 (1). Paper 783.

National Cancer Institute. 2025. Health Information National Trends Survey (HINTS). https://hints.cancer.gov/data/download-data.aspx#H7 (accessed May 25, 2025).

Nesi, J., and M. J. Prinstein. 2015. “Using Social Media for Social Comparison and Feedback-Seeking: Gender and Popularity Moderate Associations with Depressive Symptoms.” Journal of Abnormal Child Psychology 43: 1427–38. https://doi.org/10.1007/s10802-015-0020-0.

Renahy, E., I. Parizot, and P. Chauvin. 2010. “Determinants of the Frequency of Online Health Information Seeking: Results of a Web-Based Survey Conducted in France in 2007.” Informatics for Health and Social Care 35 (1): 25–39. https://doi.org/10.3109/17538150903358784.

Rieh, S. Y., and N. J. Belkin. 1998. “Understanding Judgment of Information Quality and Cognitive Authority in the WWW.” In Proceedings of the 61st Annual Meeting of the American Society for Information Science, Vol. 35, 279–89.

Rogova, G. L., and E. Bosse. 2010. “Information Quality in Information Fusion.” In 2010 13th International Conference on Information Fusion, 1–8. IEEE. https://doi.org/10.1109/ICIF.2010.5711976.

Schaul, K., S. Y. Chen, and N. Tiku. 2023. “Inside the Secret List of Websites that Make AI like ChatGPT Sound Smart.” Washington Post, April 19. https://www.washingtonpost.com/technology/interactive/2023/ai-chatbot-learning/ (accessed October 1, 2025).

Shafee, T., G. Masukume, L. Kipersztok, D. Das, M. Häggström, and J. Heilman. 2017. “Evolution of Wikipedia’s Medical Content: Past, Present and Future.” Journal of Epidemiology & Community Health 71 (11): 1122–9. https://doi.org/10.1136/jech-2016-208601.

Similarweb. 2025a. Most Visited Websites in Canada. Similarweb. https://www.similarweb.com/top-websites/canada/ (accessed October 1, 2025).

Similarweb. 2025b. Most Visited Websites in United States. Similarweb. https://www.similarweb.com/top-websites/united-states/ (accessed October 1, 2025).

Smith, D. A. 2020. “Situating Wikipedia as a Health Information Resource in Various Contexts: A Scoping Review.” PLoS One 15 (2): e0228786. https://doi.org/10.1371/journal.pone.0228786.

Smith, D. A. 2023. “‘I’m Comfortable with it’: User Stories of Health Information on Wikipedia.” First Monday 28 (8). https://doi.org/10.5210/fm.v28i8.12897.

Starcevic, V. 2024. “The Impact of Online Health Information Seeking on Patients, Clinicians, and Patient-Clinician Relationship.” Psychotherapy and Psychosomatics 93 (2): 80–4. https://doi.org/10.1159/000538149.

Stevens, J. P. 2002. Applied Multivariate Statistics for the Social Sciences. Lawrence Erlbaum Associates. https://doi.org/10.4324/9781410604491.

Stewart, B., and B. Ju. 2020. “On Black Wikipedians: Motivations Behind Content Contribution.” Information Processing & Management 57 (3). https://doi.org/10.1016/j.ipm.2019.102134.

Stvilia, B., L. Mon, and Y. J. Yi. 2009. “A Model for Online Consumer Health Information Quality.” Journal of the American Society for Information Science and Technology 60 (9): 1781–91. https://doi.org/10.1002/asi.21115.

Tripodi, F. 2023. “Ms. Categorized: Gender, Notability, and Inequality on Wikipedia.” New Media & Society 25 (7): 1687–707. https://doi.org/10.1177/14614448211023772.

Wang, R. Y., and D. M. Strong. 1996. “Beyond Accuracy: What Data Quality Means to Data Consumers.” Journal of Management Information Systems 12 (4): 5–33. https://doi.org/10.1080/07421222.1996.11518099.

Wang, X., J. Shi, and H. Kong. 2021. “Online Health Information Seeking: A Review and Meta-analysis.” Health Communication 36 (10): 1163–75. https://doi.org/10.1080/10410236.2020.1748829.

Weinberg, J. D., J. Freese, and D. McElhattan. 2014. “Comparing Data Characteristics and Results of an Online Factorial Survey Between a Population-Based and a Crowdsource-Recruited Sample.” Sociological Science 1: 292–310. https://doi.org/10.15195/v1.a19.

© 2025 the author(s), published by De Gruyter, Berlin/Boston

This work is licensed under the Creative Commons Attribution 4.0 International License.
